GSoC 2024

Robotics applications are typically distributed: a collection of concurrent, asynchronous components that communicate through some middleware (ROS messages, DDS…). Building robotics applications is a complex task. Integrating existing nodes or libraries that provide already-solved functionality, and using suitable tools, can increase software robustness and shorten development time. JdeRobot provides several tools, libraries and reusable nodes, written in C++, Python or JavaScript. They are ROS-friendly and fully compatible with ROS-Noetic and ROS2-Humble (and Gazebo11).

Our community mainly works on four development areas:

- Education in Robotics. RoboticsAcademy is our main project. It is a ROS-based framework for learning robotics and computer vision with drones, autonomous cars, etc. It is a collection of exercises and challenges, programmed in Python, for engineering students.

- Robot Programming Tools. For instance, BT-Studio, for robot programming with Behavior Trees, and VisualCircuit, for visual robot programming with connected blocks, as in electronic circuits.

- Machine Learning in Robotics. For instance, the BehaviorMetrics tool for assessing neural networks in end-to-end autonomous driving; RL-Studio, a library for training Reinforcement Learning algorithms for robot control; and the DetectionMetrics tool for evaluating visual detection neural networks and algorithms.

- Reconfigurable Computing in Robotics. FPGA-Robotics, for programming robots with reconfigurable computing (FPGAs) using open tools such as IceStudio and F4PGA, and Verilog-based reusable blocks for robotics applications.

Selected contributors

The contributors selected for Google Summer of Code 2024 are the following:

Zebin Huang

End-to-end autonomous vehicle driving based on text-based instructions: research project regarding Autonomous Driving + LLMs

Ideas list

This open source organization welcomes contributors in these topics:

Project #1: Robotics-Academy: migration to Gazebo Fortress

Brief explanation: Robotics-Academy is a framework for learning robotics and computer vision. It consists of a collection of robot programming exercises. The students have to code in Python the behavior of a given (either simulated or real) robot to accomplish a task related to robotics or computer vision. It uses standard middleware and libraries such as ROS or OpenCV.

Nowadays, Robotics Academy offers the student up to 26 exercises, and another 11 prototype exercises. All of them come ready to use in the RoboticsAcademy Docker image (RADI). The only requirement for the students is to download the Docker image; all the dependencies are installed inside the RADI.

The RADI is one key point of the platform and Project #1 aims to keep improving it. One main component of the RADI is Gazebo. Currently, the RADI is based on Gazebo11, whose end of life (September 2025) is approaching. The main goal of the project is to migrate the RADI to Gazebo Fortress. The migration will be demonstrated by having a couple of exercises running in ROS2 with the new RADI.

- Skills required/preferred: Docker, Gazebo Fortress, Python and ROS/ROS2.

- Difficulty rating: medium

- Expected results: New Docker image based on Gazebo Fortress.

- Expected size: 175h

- Mentors: Pedro Arias (pedro.ariasp AT upm.es) and Pawan Wadhwani (pawanw17 AT gmail.com)

Project #2: Robotics-Academy: CI & Testing

Brief explanation: Robotics-Academy is a framework for learning robotics and computer vision. It consists of a collection of robot programming exercises. The students have to code in Python the behavior of a given (either simulated or real) robot to accomplish a task related to robotics or computer vision.

Project #2 seeks to identify and address potential issues early on, improving the maintainability and reliability of the Robotics-Academy software. This will be achieved through automated testing and continuous integration (CI). The project includes defining a testing strategy and implementing a CI pipeline.

- Skills required/preferred: Docker, GitHub Actions, Python, JavaScript, pytest, Selenium

- Difficulty rating: medium

- Expected results: Tests on RoboticsApplicationManager (RAM), RoboticsInfrastructure (RI) and RoboticsAcademy (RA)

- Expected size: 350h

- Mentors: Pedro Arias (pedro.ariasp AT upm.es), Pawan Wadhwani (pawanw17 AT gmail.com) and Miguel Fernandez (miguel.fernandez.cortizas AT upm.es)

Project #3: Robotics-Academy: improve Deep Learning based exercises

Brief explanation: As of now, Robotics-Academy includes two exercises based on deep learning (DL): digit classification and human detection. These exercises allow users to submit a pre-trained DL model and benchmark its real-time performance in an easy and intuitive manner. Under the hood, we utilize ONNX, which supports various formats for submitting trained models.

The main objective of this project is to enhance the robustness of these exercises and standardize their appearance and functionality. To achieve this, the exercises’ frontend will be migrated to React, following the example of other exercises already functioning on our platform, such as Follow Line or Obstacle Avoidance. Additionally, we aim to briefly explore ONNX tools like model quantization or graph optimization to reduce the computational costs associated with running the exercises. Once we have addressed the issues related to robustness, appearance, and performance, we will update the documentation for all exercises, providing updated resources to guide students in their learning process.

- Skills required/preferred: React, Python, Deep Learning, Git

- Difficulty rating: easy

- Expected results: unified and robust deep learning exercises in Robotics-Academy

- Expected size: 90h

- Mentors: David Pascual (d.pascualhe AT gmail.com) and David Pérez (david.perez.saura AT upm.es)

Project #4: Robotics-Academy: new exercise using Deep Learning for Visual Control

Brief explanation: The goal of this project is to develop a new deep learning exercise focused on visual robotic control in the context of Robotics-Academy. We will create a web-based interface that allows users to input a trained model, which will take camera input from a drone or car and output the linear speed and angular velocity of the vehicle. The controlled robot and its environment will be simulated using Gazebo. The objectives of this project include:

- Updating the web interface to accept models trained with PyTorch/TensorFlow.

- Building new widgets to monitor exercise results.

- Preparing a simulated environment.

- Coding the core application that feeds input data to the trained model and returns the results.

- Training a naive model to demonstrate how the exercise can be solved.
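The core application loop named in the objectives above (feed a camera frame to the model, get back a velocity command) can be sketched roughly as follows. This is only an illustration under stated assumptions, not the exercise's implementation: `naive_model`, `control_step`, `clamp` and the speed limits are hypothetical names and values, the frame is a plain grayscale grid rather than a real camera image, and the "model" is a hand-written heuristic standing in for a trained network.

```python
from typing import Callable, List, Tuple

Frame = List[List[float]]      # grayscale image, values in [0, 1] (assumed format)
Command = Tuple[float, float]  # (linear speed in m/s, angular velocity in rad/s)

MAX_LINEAR = 2.0   # hypothetical limits, chosen for illustration only
MAX_ANGULAR = 1.0


def naive_model(frame: Frame) -> Command:
    """Stand-in for a trained model: steer toward the brightest image columns."""
    width = len(frame[0])
    column_sums = [sum(row[c] for row in frame) for c in range(width)]
    total = sum(column_sums)
    if total == 0:
        # Nothing visible: stop and rotate in place to search.
        return 0.0, 0.5
    # Horizontal centroid of brightness, normalized to [-1, 1] (left is negative).
    centroid = sum(c * s for c, s in enumerate(column_sums)) / total
    half = (width - 1) / 2
    offset = (centroid - half) / half if half > 0 else 0.0
    # Target on the left gives a positive (counter-clockwise) angular velocity.
    angular = -offset * MAX_ANGULAR
    # Slow down when the target is far from the image center.
    linear = MAX_LINEAR * (1.0 - abs(offset))
    return linear, angular


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def control_step(model: Callable[[Frame], Command], frame: Frame) -> Command:
    """Run one inference and return a command limited to the allowed ranges."""
    linear, angular = model(frame)
    return (clamp(linear, 0.0, MAX_LINEAR),
            clamp(angular, -MAX_ANGULAR, MAX_ANGULAR))
```

In a real exercise the frame would come from the simulated camera and the command would be sent to the robot through the middleware. Keeping the model behind a plain callable is one possible way to let a user-submitted model replace the heuristic without touching the loop.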

This new exercise may utilize the infrastructure developed for the “Human detection” Deep Learning exercise. The following videos showcase one of our current web-based exercises and a visual control task solved using deep learning:

- Skills required/preferred: Python, Deep Learning, Gazebo, React, ROS2

- Difficulty rating: medium

- Expected results: a web-based exercise for robotic visual control using deep learning

- Expected size: 350h

- Mentors: David Pascual (d.pascualhe AT gmail.com) and Pankhuri Vanjani (pankhurivanjani AT gmail.com)

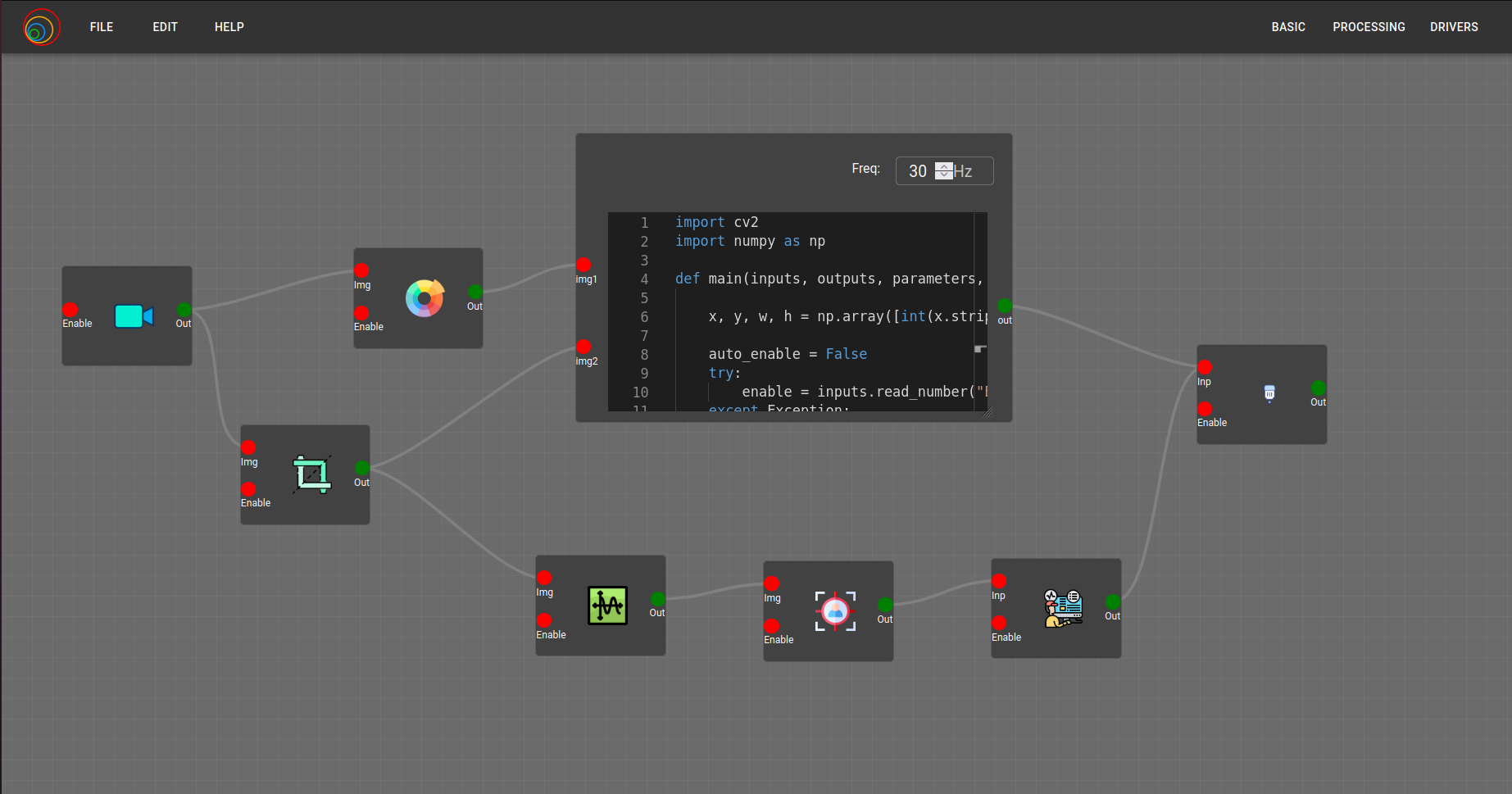

Project #5: VisualCircuit: block library

Brief explanation: VisualCircuit allows users to program robotic intelligence using a visual language which consists of blocks and wires, as in electronic circuits. Over the last few years we focused on migrating the old POSIX IPC implementation to a cross-platform Python shared-memory implementation. In addition, new functions for sharing data were added, as well as application demos and support for Finite State Machines. Currently, the process to build a VisualCircuit application is simple: Edit Application on the Web → Download Python Application File → Run Locally. After GSoC-2023 we also have a working prototype of dockerized execution of the robotics application from the browser. You can read further about the tool on the website.

The aim for this year is to make VisualCircuit easy to host and deploy for a larger audience. We want to make the process of adding blocks to VisualCircuit easier by creating a GitHub repository where people can contribute their designs, which will then be verified by maintainers and merged. A wide block library will make the development of new robotics applications faster and safer, as they will largely reuse tested blocks. In addition, we wish to add automated testing to VisualCircuit. To that end, the contributor will work with GitHub Actions to automate the deployment and testing of different parts of VisualCircuit.

- Skills required/preferred: ROS2, Gazebo, Python, GitHub

- Difficulty rating: medium

- Expected results: Block library for VisualCircuit, automated testing using GitHub Actions and example robotics applications developed with them.

- Expected size: 175h

- Mentors: José M. Cañas (josemaria.plaza AT gmail.com) and Toshan Luktuke (toshan1603 AT gmail.com)

Project #6: Robotics Academy: Exercise builder

- Brief explanation: The Exercise Builder is a user-friendly, Low Code/No Code solution designed to streamline the creation and publication of exercises on Robotics Academy. This feature empowers educators and developers to generate engaging robotics exercises without the need for extensive coding expertise.

- Skills required/preferred: Docker, Django and React

- Difficulty rating: Medium

- Expected results: Create a new exercise using the Exercise Builder

- Expected size: 350h

- Repository Link - RoboticsAcademy, RoboticsApplicationManager, RoboticsInfrastructure

- Mentors: Apoorv Garg (apoorvgarg.ms AT gmail.com) and David Valladares (d.valladaresvigara AT gmail.com)

Project #7: Robotics Academy: improvement of Gazebo scenarios and robot models

Brief explanation: Currently Robotics Academy offers the student up to 26 exercises, and another 11 prototype exercises. The main goal of this project is to improve the current Gazebo scenarios and robot models for many of the exercises, making them more appealing and realistic without greatly increasing the computing power required for rendering. For instance, the world model of the FollowLine exercise will be enhanced to have a 3D race circuit, and several models will be reviewed to make them low poly (= faster to simulate).

- Skills required/preferred: experience with Gazebo, SDF, URDF, ROS2 and Python

- Difficulty rating: easy

- Expected results: New Gazebo scenarios

- Expected size: small (~90h)

- Mentors: Pedro Arias (pedro.ariasp AT upm.es) and Shashwat Dalakoti (shash.dal623 AT gmail.com)

Project #8: Robotics Academy: improvement of autonomous driving exercises

Brief explanation: We have several exercises related to autonomous driving in Robotics Academy: Autoparking, Road junction, Obstacle Avoidance, etc. The main goal of this project is to update the Car Junction exercise, upgrading its car models, template and frontend in Robotics Academy. In addition, a new model of the autonomous car, with Ackermann dynamics, was developed last year. Another goal of this GSoC project is to add an onboard LIDAR sensor to the simulated car model, making it more realistic and closer to real-life Waymo autonomous cars.

- Skills required/preferred: Gazebo, URDF, ROS2, React
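For readers unfamiliar with Ackermann steering: unlike a differential-drive robot, such a car cannot rotate in place, and its turning rate depends on both speed and steering angle. A common simplification is the kinematic single-track ("bicycle") model, sketched below. This is an illustrative sketch only, not the car model developed for Robotics Academy; `CarState`, `ackermann_step`, the default wheelbase and the time step are hypothetical names and values.

```python
import math
from dataclasses import dataclass


@dataclass
class CarState:
    x: float = 0.0        # position (m)
    y: float = 0.0        # position (m)
    heading: float = 0.0  # yaw angle (rad)


def ackermann_step(state: CarState, speed: float, steering_angle: float,
                   wheelbase: float = 2.5, dt: float = 0.1) -> CarState:
    """Advance the state by one Euler step of the kinematic bicycle model.

    speed is in m/s, steering_angle in rad (positive steers left),
    wheelbase in m (assumed value), dt in s.
    """
    x = state.x + speed * math.cos(state.heading) * dt
    y = state.y + speed * math.sin(state.heading) * dt
    # The heading only changes while the car is moving.
    heading = state.heading + (speed / wheelbase) * math.tan(steering_angle) * dt
    return CarState(x, y, heading)
```

Calling `ackermann_step` repeatedly with a constant non-zero steering angle traces an approximate circle whose radius is `wheelbase / tan(steering_angle)`.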

- Difficulty rating: easy

- Expected results: updated and operating new release of the Car Junction exercise at RoboticsAcademy

- Expected size: small (~90h)

- Mentors: Apoorv Garg (apoorvgarg.ms AT gmail.com) and David Valladares (d.valladaresvigara AT gmail.com)

Project #9: BT-Studio: a tool for programming robots with Behavior Trees

Brief explanation: Behavior Trees are a recent paradigm for organizing robot deliberation. There are brilliant open source projects such as BehaviorTree.CPP and PyTrees. Our BT-Studio has been created to provide a web-based graphical editor of Behavior Trees. It includes a converter to Python and dockerized execution of the generated robotics application from the browser.

The goal of this proposed GSoC project is to develop a Behavior Tree library and a collection of example robotics applications.
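To give a feel for the paradigm, here is a minimal, library-free sketch of a Behavior Tree with the two classic composite nodes, Sequence and Fallback. It is not BT-Studio code nor the API of any existing library; the class names, `make_tree` and the example actions are hypothetical, and the composites are the simple memoryless variants that restart from their first child on every tick.

```python
from enum import Enum
from typing import Callable, List


class Status(Enum):
    SUCCESS = "success"
    FAILURE = "failure"
    RUNNING = "running"


class Action:
    """Leaf node wrapping a callable that returns a Status."""

    def __init__(self, name: str, fn: Callable[[], Status]):
        self.name = name
        self.fn = fn

    def tick(self) -> Status:
        return self.fn()


class Sequence:
    """Ticks children in order; stops at the first one that does not succeed."""

    def __init__(self, children: List):
        self.children = children

    def tick(self) -> Status:
        for child in self.children:
            status = child.tick()
            if status is not Status.SUCCESS:
                return status
        return Status.SUCCESS


class Fallback:
    """Ticks children in order; stops at the first one that does not fail."""

    def __init__(self, children: List):
        self.children = children

    def tick(self) -> Status:
        for child in self.children:
            status = child.tick()
            if status is not Status.FAILURE:
                return status
        return Status.FAILURE


def make_tree(path_clear: bool, log: List[str]) -> Fallback:
    """Example tree: move forward if the path is clear, otherwise turn."""

    def check_path() -> Status:
        log.append("check_path")
        return Status.SUCCESS if path_clear else Status.FAILURE

    def move_forward() -> Status:
        log.append("move_forward")
        return Status.SUCCESS

    def turn() -> Status:
        log.append("turn")
        return Status.SUCCESS

    return Fallback([
        Sequence([Action("check_path", check_path),
                  Action("move_forward", move_forward)]),
        Action("turn", turn),
    ])
```

Ticking `make_tree(True, log)` runs the check and then moves forward; with `path_clear=False` the Sequence fails after the check and the Fallback tries the turn action instead. A robot application would typically tick the root at a fixed rate.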

- Skills required/preferred: Python, ROS, Gazebo

- Difficulty rating: medium

- Expected results: Behavior Trees library for BT-Studio and several example robotics applications developed with them.

- Expected size: 175h

- Mentors: José M. Cañas (josemaria.plaza AT gmail.com) and Luis R. Morales (lr.morales.iglesias AT gmail.com)

Project #10: End-to-end autonomous vehicle driving based on text-based instructions: research project regarding Autonomous Driving + LLMs

Brief explanation: In previous editions of GSoC, we have explored Behavior Metrics as a tool for evaluating end-to-end autonomous driving models. We have also explored optimization of end-to-end models and even navigation based on segmented images and commands (see video and project link). In this project, we would like to combine the previously acquired knowledge with LLMs, building on the projects that we successfully developed in earlier GSoC editions. The idea is to integrate an LLM system with an end-to-end autonomous driving model so the user can give text commands directly to the vehicle, as you’d do when using a real-life taxi (“please, stop here”, “please, turn to the left in the following intersection”). This idea has already been explored in research projects, and we will be replicating it (see references).

- Skills required/preferred: Python and deep learning knowledge (Python/PyTorch/transformers)

- Difficulty rating: hard

- Expected results: end-to-end model that drives the vehicle using text-based instructions provided by an LLM.

- Expected size: 350h

- Mentors: Sergio (sergiopaniegoblanco AT gmail.com), Nikhil Paliwal (nikhil.paliwal14 AT gmail.com), David Pérez (david.perez.saura AT upm.es) and Qi Zhao (skyler.zhaomeiqi AT gmail.com)

References:

- https://github.com/opendilab/LMDrive

- https://arxiv.org/pdf/2311.14786.pdf

- https://github.com/wayveai/Driving-with-LLMs

Project #11: Robotics Academy: LLMs Assisted Tutor & Tester

Brief explanation: Include an AI-based Tutor that can help with concrete questions in Robotics Academy and can analyze submissions to find malicious code or inappropriate content. The backend LLM will receive requests through its API.

- Skills required/preferred: Docker, Python, JavaScript

- Difficulty rating: medium

- Expected results: An integrated “tutor” that can answer questions about the problem, and an Autonomous Tester that checks submissions and detects possible misbehavior.

- Expected size: 175h

- Mentors: Miguel Fernández (miguel.fernandez.cortizas AT upm.es) and Pedro Arias (pedro.ariasp AT upm.es)

Application instructions for GSoC-2024

We welcome students to contact the relevant mentors before submitting their application on the official GSoC website. If in doubt about which project(s) to ask about, send a message to jderobot AT gmail.com. We recommend browsing previous GSoC student pages to look for ready-to-use projects, and to get an idea of the expected amount of work for a valid GSoC proposal.

Requirements

- Git experience

- C++ and Python programming experience (depending on the project)

Programming tests

| Project | #1 | #2 | #3 | #4 | #5 | #6 | #7 | #8 | #9 | #10 | #11 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Academy (A) | X | X | X | X | X | X | O | X | X | X | X |
| Python (B) | X | X | X | X | X | X | X | X | X | X | X |
| ROS2 (C) | X | X | O | X | X | X | X | - | - | X | * |
| React (D) | O | O | X | O | O | X | * | - | - | O | O |

| Where: | |
| --- | --- |
| * | Not applicable |
| X | Mandatory |
| O | Optional |

Before any proposal is accepted, all candidates have to complete these programming challenges:

- (A) RoboticsAcademy challenge

- (B) Python challenge

- (C) ROS2 challenge

- (D) React challenge

Send us your information

AFTER doing the programming tests, fill in this web form with your information and challenge results. Then you are invited to ask the project mentors about the project details. We may ask you for more information, such as the following:

- Contact details

  - Name and surname:

  - Country:

  - Email:

  - Public repository/ies:

  - Personal blog (optional):

  - Twitter/Identica/LinkedIn/others:

- Timeline

  - Now split your project idea into smaller tasks. Quantify the time you think each task needs. Finally, draw up a tentative project plan (timeline) with dates covering the whole GSoC period. Don’t forget to include the days on which you don’t plan to code because of exams, holidays, etc.

  - Do you understand this is a serious commitment, equivalent to a full-time paid summer internship or summer job?

  - Do you have any known time conflicts during the official coding period?

- Studies

  - What is your school and degree?

  - Would your application contribute to your ongoing studies/degree? If so, how?

- Programming background

  - Computing experience: operating systems you use on a daily basis, known programming languages, hardware, etc.

  - Robot or Computer Vision programming experience:

  - Other software programming:

- GSoC participation

  - Have you participated in GSoC before?

  - How many times, which year, which project?

  - Have you applied but were not selected? When?

  - Have you submitted/will you submit another proposal for GSoC 2024 to a different org?

Previous GSoC students

- Pawan Wadhwani (GSoC-2023) Robotics Academy: migration to ROS2 Humble

- Meiqi Zhao (GSoC 2023) Obstacle Avoidance for Autonomous Driving in CARLA Using Segmentation Deep Learning Models

- Siddheshsingh Tanwar (GSoC 2023) Dockerization of Visual Circuit

- Prakhar Bansal (GSoC 2023) RoboticsAcademy: Cross-Platform Desktop Application using ElectronJS

- Apoorv Garg (GSoC-2022) Improvement of Web Templates of Robotics Academy exercises

- Toshan Luktuke (GSoC-2022) Improvement of VisualCircuit web service

- Nikhil Paliwal (GSoC-2022) Optimization of Deep Learning models for autonomous driving

- Akshay Narisetti (GSoC-2022) Robotics Academy: improvement of autonomous driving exercises

- Prakarsh Kaushik (GSoC-2022) Robotics Academy: consolidation of drone based exercises

- Bhavesh Misra (GSoC-2022) Robotics Academy: improve Deep Learning based Human Detection exercise

- Suhas Gopal (GSoC-2021) Shifting VisualCircuit to a web server

- Utkarsh Mishra (GSoC-2021) Autonomous Driving drone with Gazebo using Deep Learning techniques

- Siddharth Saha (GSoC-2021) Robotics Academy: multirobot version of the Amazon warehouse exercise in ROS2

- Shashwat Dalakoti (GSoC-2021) Robotics-Academy: exercise using Deep Learning for Visual Detection

- Arkajyoti Basak (GSoC-2021) Robotics Academy: new drone based exercises

- Chandan Kumar (GSoC-2021) Robotics Academy: Migrating industrial robot manipulation exercises to web server

- Muhammad Taha (GSoC-2020) VisualCircuit tool, digital electronics language for robot behaviors.

- Sakshay Mahna (GSoC-2020) Robotics-Academy exercises on Evolutionary Robotics.

- Shreyas Gokhale (GSoC-2020) Multi-Robot exercises for Robotics Academy In ROS2.

- Yijia Wu (GSoC-2020) Vision-based Industrial Robot Manipulation with MoveIt.

- Diego Charrez (GSoC-2020) Reinforcement Learning for Autonomous Driving with Gazebo and OpenAI gym.

- Nikhil Khedekar (GSoC-2019) Migration to ROS of drones exercises on JdeRobot Academy

- Shyngyskhan Abilkassov (GSoC-2019) Amazon warehouse exercise on JdeRobot Academy

- Jeevan Kumar (GSoC-2019) Improving DetectionSuite DeepLearning tool

- Baidyanath Kundu (GSoC-2019) A parameterized automata Library for VisualStates tool

- Srinivasan Vijayraghavan (GSoC-2019) Running Python code on the web browser

- Pankhuri Vanjani (GSoC-2019) Migration of JdeRobot tools to ROS 2

- Pushkal Katara (GSoC-2018) VisualStates tool

- Arsalan Akhter (GSoC-2018) Robotics-Academy

- Hanqing Xie (GSoC-2018) Robotics-Academy

- Sergio Paniego (GSoC-2018) PyOnArduino tool

- Jianxiong Cai (GSoC-2018) Creating realistic 3D map from online SLAM result

- Vinay Sharma (GSoC-2018) DeepLearning, DetectionSuite tool

- Nigel Fernandez GSoC-2017

- Okan Asik GSoC-2017, VisualStates tool

- S.Mehdi Mohaimanian GSoC-2017

- Raúl Pérula GSoC-2017, Scratch2JdeRobot tool

- Lihang Li: GSoC-2015, Visual SLAM, RGBD, 3D Reconstruction

- Andrei Militaru GSoC-2015, interoperation of ROS and JdeRobot

- Satyaki Chakraborty GSoC-2015, Interconnection with Android Wear

How to increase your chances of being selected in GSoC-2024

If you put yourself in the shoes of the mentor who has to select the student, you’ll immediately realize that some behaviors are usually rewarded. Here are some examples.

- Be proactive: Mentors are more likely to select students who openly discuss the existing ideas and/or propose their own. It is a bad idea to submit your idea only on the Google website without discussing it, because it won’t be noticed.

- Demonstrate your skills: Consider that mentors are contacted by several students applying for the same project. One way to show that you are the best candidate is to demonstrate that you are familiar with the software and that you can code. How? Browse the bug tracker (the GitHub issues of the JdeRobot project), fix some bugs and propose your patch by submitting a pull request, and/or ask mentors to challenge you! Moreover, bug fixes are a great way to get familiar with the code.

- Demonstrate your intention to stay: Students who are likely to disappear after GSoC are less likely to be selected, because there is no point in developing something that won’t be maintained. Moreover, one goal of GSoC is to bring new developers to the community.

RTFM

Read the relevant information about GSoC in the wiki / web pages before asking. Most FAQs have been answered already!

- Full documentation about GSoC on official website.

- FAQ from GSoC web site.

- If you are new to JdeRobot, take the time to familiarize yourself with JdeRobot.