GSoC 2020

JdeRobot is a software toolkit for developing robotics and computer vision applications. These domains include sensors (for instance, cameras), actuators, and intelligent software in between. It is mostly written in C++, Python and JavaScript, and provides a distributed component-based programming environment where the application program is made up of a collection of several concurrent asynchronous components. The components may run on different computers and are connected using the ICE communication middleware or ROS messages. Components interoperate through explicit interfaces. Each one may have its own independent Graphical User Interface or none at all. JdeRobot is ROS-friendly and fully compatible with ROS-Melodic (and Gazebo9).

Selected students

In the year 2020, the students selected for the Google Summer of Code have been the following:

Ideas list

JdeRobot simplifies access to hardware devices from the control program. Getting sensor measurements is as simple as calling a local function, and sending motor commands is as easy as calling another local function. The platform maps those calls to remote invocations on the components connected to the sensor or actuator devices. These can be real sensors and actuators or simulated ones, connected either locally or remotely over the network. Together, those functions form the API of the Hardware Abstraction Layer. The robotic application uses this API to get the sensor readings and send the actuator commands that unfold its behavior. Several driver components have been developed to support different physical sensors, actuators and simulators. The drivers are components that can be installed at will depending on your configuration. They are included in the official release.
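The idea of a Hardware Abstraction Layer can be illustrated with a minimal sketch. All names here (`HAL`, `SimulatedLaser`, `SimulatedMotors`, `get_laser_data`, `set_velocity`) are hypothetical and chosen only for illustration; they are not JdeRobot's actual API. The point is that the application calls plain local functions while the driver behind them can be swapped.

```python
from dataclasses import dataclass, field
from typing import List, Protocol, Tuple


class LaserDriver(Protocol):
    def read(self) -> List[float]: ...


class MotorDriver(Protocol):
    def send(self, v: float, w: float) -> None: ...


@dataclass
class SimulatedLaser:
    """Hypothetical driver: returns fixed distances in metres."""
    distances: List[float] = field(default_factory=lambda: [2.0, 0.4, 3.0])

    def read(self) -> List[float]:
        return list(self.distances)


@dataclass
class SimulatedMotors:
    """Hypothetical driver: records the last command instead of moving a robot."""
    last_command: Tuple[float, float] = (0.0, 0.0)

    def send(self, v: float, w: float) -> None:
        self.last_command = (v, w)


class HAL:
    """Hypothetical abstraction layer: local calls are forwarded to the drivers."""

    def __init__(self, laser: LaserDriver, motors: MotorDriver) -> None:
        self._laser = laser
        self._motors = motors

    def get_laser_data(self) -> List[float]:
        return self._laser.read()

    def set_velocity(self, v: float, w: float) -> None:
        self._motors.send(v, w)


def avoid_obstacles(hal: HAL, safe_distance: float = 0.5) -> None:
    """Application logic: it only sees the HAL, never the drivers."""
    if min(hal.get_laser_data()) < safe_distance:
        hal.set_velocity(0.0, 0.5)   # too close: stop and turn
    else:
        hal.set_velocity(0.3, 0.0)   # path is clear: go forward


motors = SimulatedMotors()
hal = HAL(SimulatedLaser(), motors)
avoid_obstacles(hal)
print(motors.last_command)
```

Replacing `SimulatedLaser` with a driver that talks to a real or remote device would leave `avoid_obstacles` unchanged, which is the property the paragraph above describes.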

This open source project needs further development in these topics:

Project #1: Robotics Academy: new computer vision exercises

Brief Explanation: JdeRobot’s Robotics-Academy is a framework for learning robotics and computer vision. It consists of a collection of robot programming exercises using the Python language, where the student has to code the behavior of a given (either simulated or real) robot to fit some task related to robotics or computer vision. It uses standard middleware and libraries such as ROS and OpenCV, so the student learns the state-of-the-art tools.

Currently there are many robotics exercises but few Computer Vision ones: just a Color Filter and a 3D reconstruction exercise. The goal of this project is to design and program several Computer Vision exercises in JdeRobot Academy which cover an introductory image processing course using real cameras. It may take the available OpenCVdemo component as a starting point.

The use of ROS2 for these exercises will be explored.

- Expected results: ROS-based exercises involving Computer Vision

- Knowledge Prerequisite: Python programming skills, OpenCV library.

- Mentors: Eduardo Perdices (edupergar AT gmail.com) and Ignacio Arranz (n.arranz.agueda AT gmail.com)

Project #2: Robotics Academy: multirobot version of the Amazon warehouse exercise in ROS2

Brief Explanation: Swarm robots have gained importance in the domain of Intelligent Systems, with their applications being extended to warehouses. One such popular multi-robot system can be found in Amazon warehouses, from which this project draws its inspiration. The goal of this project is to build a multi-robot navigation exercise, extending the already existing Amazon warehouse exercise (demonstrated in video) to a fleet of robots and migrating its infrastructure to ROS2.

ROS2 has brought several improvements over ROS, with changes in middleware and software architecture in many aspects, and one of its target applications is multi-robot systems. In this project, we will focus on developing new exercises with ROS2 for multiple robots (2 or 3 robots to begin with). The ROS2 Navigation stack brings better features for controlling a swarm of robots. It now supports having multiple robots in the same ROS domain navigating independently. The idea is to use the ROS2 navigation stack for control and planning, and to create new robotics exercises for JdeRobot Academy. It will involve using functionalities like collision avoidance between robots, global path planning, and multi-robot coordination for developing application-based exercises.

- Expected results: A new multirobot exercise in JdeRobot-Academy

- Knowledge Prerequisite: C++, Python programming skills, experience with ROS.

- Good to know: ROS2

- Mentors: Pankhuri Vanjani (16uec069 AT lnmiit.ac.in) and David Pascual (d.pascualhe AT gmail.com).

Project #3: Robotics-Academy: Robotic arm manipulation with MoveIt!

Brief Explanation: JdeRobot’s Robotics-Academy is an open-source framework for learning robotics and computer vision. It consists of a collection of robot programming exercises using the Python language, where the student has to code the behavior of a given (either simulated or real) robot to fit some task related to robotics or computer vision. It is compatible with the ROS middleware and the Gazebo simulator.

At the moment, Robotics Academy is almost fully oriented to service robotics. The idea for this project is therefore to expand it towards autonomous robotic manipulation using robotic arms and cobots (collaborative robots designed to interact with humans). For that purpose, the GSoC student will develop a collection of exercises of progressive complexity, ranging from the classical pick-and-place using standard ABB, Kuka or Fanuc industrial robotic arms to more complex, fully automated sorting and manipulation tasks, including 3D perception and collision checking. These last exercises will contain vision-assisted cobots and/or arm manipulators attached to mobile AGVs. Realistic environments including industrial work cells and kitting warehouse scenarios will also be developed for the Gazebo simulator.

Together with ROS and Gazebo, MoveIt! will be used as the main working framework for the exercises. MoveIt! is open source software running on top of ROS that provides interaction, visualization, planning and control functionalities for robotic manipulators. As it is already being ported to ROS2 (as MoveIt! 2), at the end of the project we will explore the migration of the exercises to ROS2 using MoveIt! 2.

This proposal for diversifying our Robotics-Academy matches well with the increasing interest in extending the ROS capabilities to advanced manipulation and manufacturing. Clear examples are the ROS Industrial project, ROS-based competitions such as the ARIAC series, and the RoboND project by Udacity. Our preliminary developments in robotic manipulation with ROS and MoveIt! can also be found in the Final Degree thesis of Ignacio Malo.

- Expected results: a set of exercises for robotic arms and Gazebo (description + code + worlds).

- Knowledge Prerequisites: Python, ROS and Gazebo

- Mentors: Diego Martín (diego.martin.martin AT urjc.es)

Project #4: Robotics-Academy: exercises on Evolutionary Robotics

Brief Explanation: JdeRobot’s Robotics-Academy is a framework for learning robotics and computer vision. It consists of a collection of robot programming exercises using the Python language, where the student has to code the behavior of a given (either simulated or real) robot to fit some task related to robotics or computer vision. It is based on ROS and Gazebo.

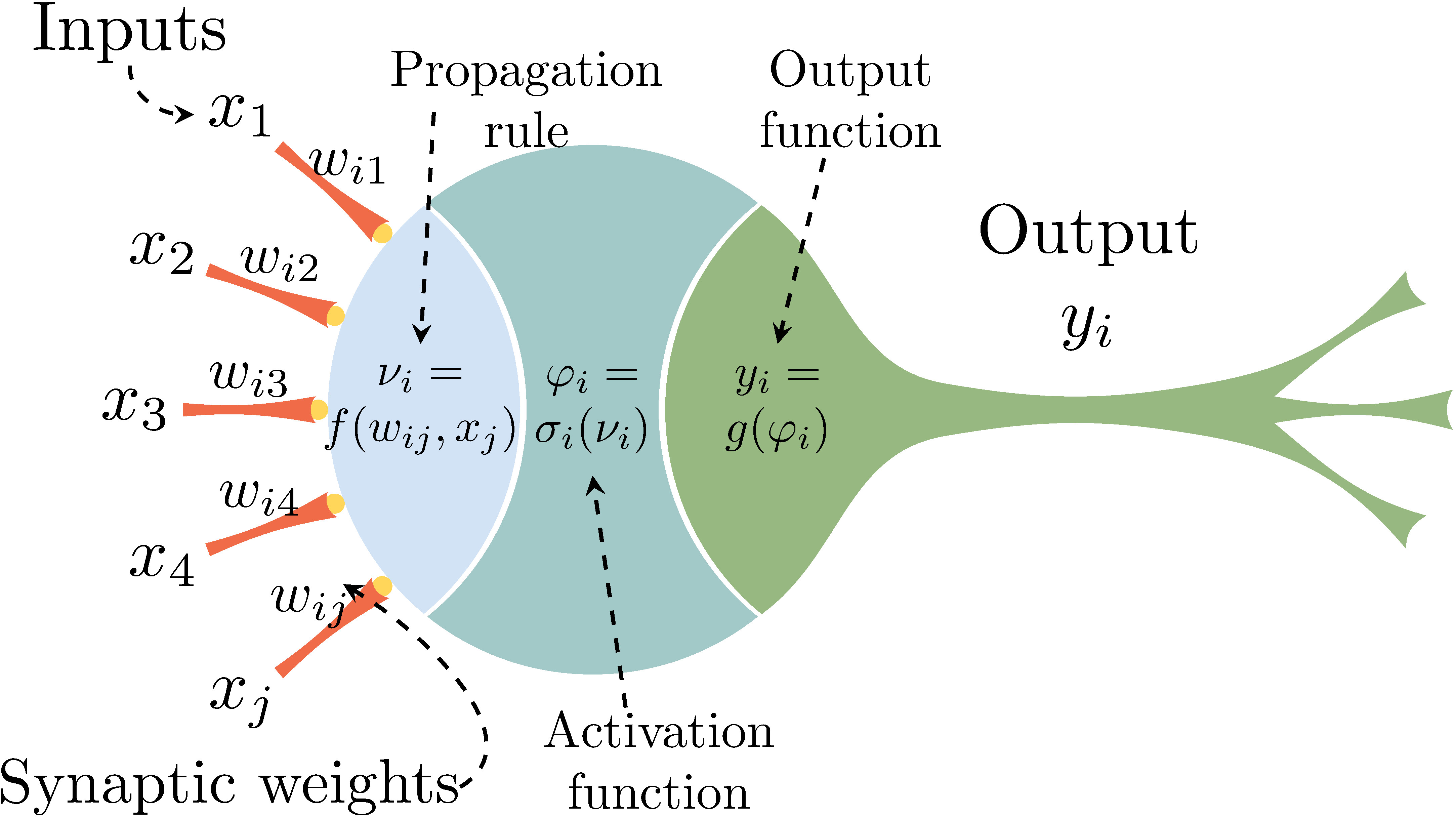

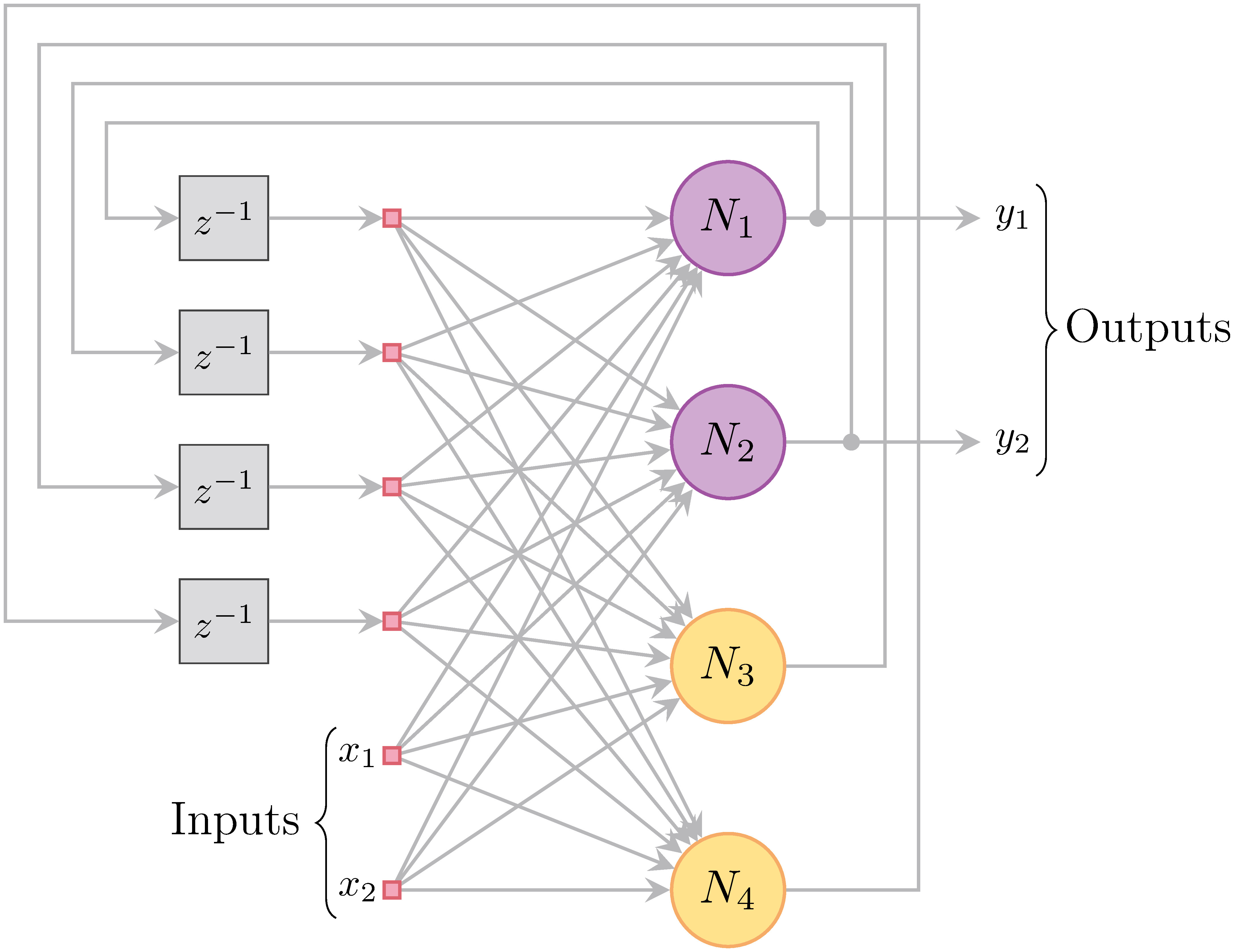

The idea for this project is to introduce Evolutionary Robotics into Robotics-Academy by developing exercises with mobile robots. At the base level, we will implement static neural networks (e.g. MLP) and time-dependent neural networks (e.g. CTRNN). Moreover, basic genetic algorithms should be implemented for use in Robotics-Academy; at least simple selection, crossover, mutation and elites will be included in the development. At the user level, we will implement an API which allows the user to define the neural network morphology and connections, as well as the boundaries for weights, thresholds and gains. Input networks should be connected to sensors and output networks to the actuators; their definition will also be part of the API.
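The genetic operators named above (selection, crossover, mutation and elites) can be sketched as follows. This is only an illustration under stated assumptions: the function `evolve`, its parameters, tournament selection, one-point crossover and Gaussian mutation are choices made for this example, not the design of the project, and the toy fitness function stands in for a robot evaluation in simulation.

```python
import random
from typing import Callable, List

Genome = List[float]  # e.g. the flattened weights of a small neural network


def evolve(fitness: Callable[[Genome], float], genome_len: int,
           pop_size: int = 30, generations: int = 60, n_elites: int = 2,
           mutation_rate: float = 0.1, mutation_sigma: float = 0.3,
           seed: int = 0) -> Genome:
    """Minimal generational GA; assumes genome_len >= 2 and pop_size >= 3."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(genome_len)]
                  for _ in range(pop_size)]

    def tournament() -> Genome:
        # Selection: best of three randomly drawn individuals.
        return max(rng.sample(population, 3), key=fitness)

    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        next_gen = [g[:] for g in ranked[:n_elites]]   # elites survive unchanged
        while len(next_gen) < pop_size:
            mother, father = tournament(), tournament()
            cut = rng.randrange(1, genome_len)          # one-point crossover
            child = mother[:cut] + father[cut:]
            child = [w + rng.gauss(0.0, mutation_sigma)
                     if rng.random() < mutation_rate else w
                     for w in child]                    # Gaussian mutation
            next_gen.append(child)
        population = next_gen
    return max(population, key=fitness)


# Toy fitness (hypothetical): closeness of the genome to a fixed target vector.
TARGET = [0.5, -0.2, 0.8, 0.1]


def toy_fitness(genome: Genome) -> float:
    return -sum((g - t) ** 2 for g, t in zip(genome, TARGET))


best = evolve(toy_fitness, genome_len=len(TARGET))
print([round(w, 2) for w in best], round(toy_fitness(best), 4))
```

Because the elites are copied unchanged into each new generation, the best fitness in the population never decreases from one generation to the next.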

- Expected results: a set of exercises for evolutionary robotics (description + code + worlds).

- Knowledge Prerequisite: C++, Python and ROS.

- Mentors: Álvaro Gutierrez (a.gutierrez AT upm.es) and Ignacio Arranz (n.arranz.agueda AT gmail.com)

Project #5: Improving VisualStates tool with ROS2 support and web-based GUI

Brief Explanation: VisualStates is a tool for programming robot behaviors using automata. It combines a graphical language to specify the states and the transitions with a text language (Python or C++). It generates a ROS node which implements the automaton and shows a GUI at runtime with the active state, for debugging. There is always a root state: if a user creates a single-level automaton, the states defining the behavior are added as children of the root state. Every automaton is also a state. Therefore, every automaton can be added as a child of any other state. That way, the user is able to compose reactive behavior hierarchies.

The tool provides import functionality: when the user imports an already created automaton, the whole automaton is copied into the current one. Each hierarchy of behavior contains a local namespace for its specific auxiliary code, and the overall behavior can access the global namespace, which contains the ROS-related data and globally accessible auxiliary data.
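The "every automaton is also a state" idea can be sketched with a small hierarchical state machine. The `State` class and its methods below are hypothetical names written for this example; they are not the code that VisualStates generates.

```python
from typing import Callable, Dict, List, Optional, Tuple

Condition = Callable[[dict], bool]


class State:
    """A state that may itself contain a child automaton (hypothetical design)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.children: Dict[str, "State"] = {}
        self.transitions: List[Tuple[str, Condition, str]] = []
        self.initial: Optional[str] = None
        self.active: Optional[str] = None

    def add_child(self, child: "State", initial: bool = False) -> "State":
        self.children[child.name] = child
        if initial or self.initial is None:
            self.initial = child.name
        return child

    def add_transition(self, src: str, condition: Condition, dst: str) -> None:
        self.transitions.append((src, condition, dst))

    def step(self, data: dict) -> None:
        """Fire at most one transition at this level, then step the active child."""
        if not self.children:
            return
        if self.active is None:
            self.active = self.initial
        for src, condition, dst in self.transitions:
            if src == self.active and condition(data):
                self.active = dst
                self.children[dst].active = None  # child restarts from its initial state
                break
        self.children[self.active].step(data)

    def active_path(self) -> List[str]:
        path = [self.name]
        if self.active is not None:
            path += self.children[self.active].active_path()
        return path


root = State("root")
patrol = root.add_child(State("patrol"), initial=True)
root.add_child(State("avoid"))
patrol.add_child(State("forward"), initial=True)   # "patrol" is itself an automaton
patrol.add_child(State("turn"))
root.add_transition("patrol", lambda d: d["obstacle"], "avoid")
root.add_transition("avoid", lambda d: not d["obstacle"], "patrol")
patrol.add_transition("forward", lambda d: d["at_corner"], "turn")

root.step({"obstacle": False, "at_corner": True})
first_path = root.active_path()
root.step({"obstacle": True, "at_corner": False})
second_path = root.active_path()
print(first_path, second_path)
```

Importing an existing automaton corresponds, in this sketch, to calling `add_child` with an already populated `State`.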

Within the scope of the project, the following improvements are targeted:

- ROS2 support: ROS2 is a complete rewrite of the original ROS robotic middleware, and addresses some major bottlenecks in ROS 1, leading to a major architecture change. As these versions aren’t directly compatible, the option of generating ROS 2 nodes instead is desirable in order to benefit from these improvements.

- Web-based GUI: Currently the GUI used by the tool is Qt-based. While Qt allows cross-system compatibility, as this library is supported on multiple systems, new web-based technologies simplify this task and add other aspects that are desirable for the future of the GUI. We would like to study and reimplement the GUI in one of these web-based technologies.

- Expected results: ROS2 node generation feature and a new web-based GUI for the VisualStates tool.

- Knowledge Prerequisite: Python and C++ programming skills. Web technologies (knowledge of HTML and JavaScript/Node.js at least)

- Mentors: Luis Roberto Morales (lr.morales.iglesias AT gmail.com), Jose M. Cañas (josemaria.plaza AT gmail.com)

Project #6: Python robot applications in the web browser: extending the PyOnBrowser converter to JS

Brief Explanation: WebSim2D is a web-based robot simulator which accepts JavaScript programming of its robots. We are working on allowing the programming of such robots in Python, directly from the browser. In GSoC-2019 we developed the first release of the PyOnBrowser converter, which translates from Python to JavaScript and supports the main Python language features such as arithmetic expressions, variable declarations, conditional statements, while and for loops, and functions. It is based on an Abstract Syntax Trees library. More details of that project can be found at Srinivasan’s blog. For 2020 we aim to extend the Python coverage of the converter, including support for strings, classes, standard math, iterators, etc. In addition, automatic tests should be included to improve the quality of each accepted contribution.

Running Python robot applications in the web browser is awesome, and it opens the door to learning robotics in a multiplatform way (Linux, Windows, macOS).
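To give a feel for an AST-based translation, here is a minimal sketch that uses Python's standard `ast` module to convert a very small subset (numeric assignments, arithmetic, single comparisons and `while` loops) to JavaScript. It is not the PyOnBrowser implementation; the helper names `expr_to_js` and `stmt_to_js` are hypothetical, and anything outside the subset raises `NotImplementedError`.

```python
import ast
from typing import Set

BIN_OPS = {ast.Add: "+", ast.Sub: "-", ast.Mult: "*", ast.Div: "/"}
CMP_OPS = {ast.Lt: "<", ast.Gt: ">", ast.LtE: "<=", ast.GtE: ">=",
           ast.Eq: "===", ast.NotEq: "!=="}


def expr_to_js(node: ast.expr) -> str:
    """Translate a numeric expression; every operation is fully parenthesized."""
    if (isinstance(node, ast.Constant) and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)):
        return repr(node.value)
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.BinOp) and type(node.op) in BIN_OPS:
        op = BIN_OPS[type(node.op)]
        return f"({expr_to_js(node.left)} {op} {expr_to_js(node.right)})"
    if (isinstance(node, ast.Compare) and len(node.ops) == 1
            and type(node.ops[0]) in CMP_OPS):
        op = CMP_OPS[type(node.ops[0])]
        return f"({expr_to_js(node.left)} {op} {expr_to_js(node.comparators[0])})"
    raise NotImplementedError(ast.dump(node))


def stmt_to_js(node: ast.stmt, declared: Set[str], indent: int = 0) -> str:
    pad = "  " * indent
    if (isinstance(node, ast.Assign) and len(node.targets) == 1
            and isinstance(node.targets[0], ast.Name)):
        name = node.targets[0].id
        # 'var' is function-scoped, which is closer to Python than block-scoped 'let'.
        keyword = "" if name in declared else "var "
        declared.add(name)
        return f"{pad}{keyword}{name} = {expr_to_js(node.value)};"
    if isinstance(node, ast.While) and not node.orelse:
        body = "\n".join(stmt_to_js(s, declared, indent + 1) for s in node.body)
        return f"{pad}while ({expr_to_js(node.test)}) {{\n{body}\n{pad}}}"
    raise NotImplementedError(ast.dump(node))


source = """
total = 0
i = 0
while i < 3:
    total = total + i * 2
    i = i + 1
"""
declared: Set[str] = set()
js = "\n".join(stmt_to_js(s, declared) for s in ast.parse(source).body)
print(js)
```

Extending coverage to strings, classes or iterators, as proposed for 2020, would mean adding more node types to these two functions, each with its own automatic test.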

- Expected results: Python applications running inside the WebSim2D robot simulator in the browser.

- Knowledge Prerequisite: Python, JavaScript, compilers.

- Mentors: Gorka Guardiola Múzquiz (gorka.guardiola AT urjc.es) and Srinivasan Vijayraghavan (Srinivasan.Vijayraghavan AT iiitb.org)

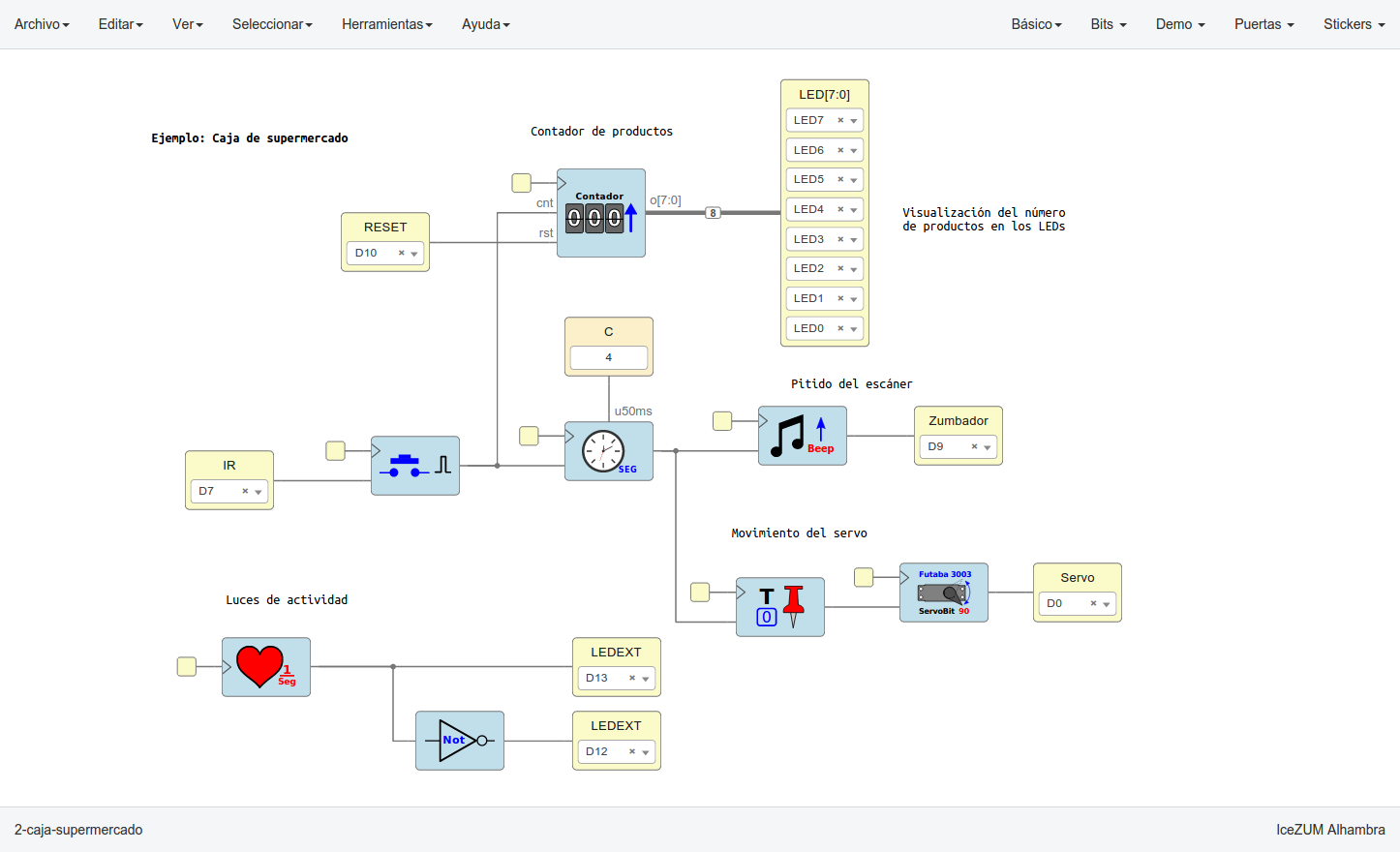

Project #7: VisualCircuit tool, digital electronics language for robot behaviors

Brief Explanation: In reconfigurable circuits (FPGAs) a hardware description language is used to visually specify the circuit configuration and its behavior. For instance, the open source IceStudio tool uses such a visual language to configure FPGAs. The idea of this project is to explore the use of the same visual language to program reactive robot behaviors. There are blocks (existing circuits) and wires to connect their inputs and outputs. Instead of synthesizing the visual program into an FPGA implementation, the goal is to synthesize it into a Python program. Each block is translated into a thread that runs a transforming function in fast iterations. Each iteration reads the block inputs, does some specific processing to compute the right values and writes them to its outputs. Each wire is translated into a shared variable which the blocks can write to or read from.
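The translation described above (one thread per block, one shared variable per wire) might look roughly like the following sketch. The `Wire` and `Block` classes, the 1 ms iteration period and the example blocks are assumptions made for illustration; the project itself does not prescribe them.

```python
import threading
import time
from typing import Callable, List, Sequence, Tuple


class Wire:
    """Shared variable connecting a block output to block inputs."""

    def __init__(self, initial: float = 0.0) -> None:
        self._value = initial
        self._lock = threading.Lock()

    def write(self, value: float) -> None:
        with self._lock:
            self._value = value

    def read(self) -> float:
        with self._lock:
            return self._value


class Block(threading.Thread):
    """Thread that repeatedly reads its inputs, transforms them, writes its outputs."""

    def __init__(self, func: Callable[..., Tuple[float, ...]],
                 inputs: Sequence[Wire], outputs: Sequence[Wire],
                 stop_event: threading.Event, period: float = 0.001) -> None:
        super().__init__(daemon=True)
        self.func = func
        self.inputs = list(inputs)
        self.outputs = list(outputs)
        self.stop_event = stop_event
        self.period = period

    def run(self) -> None:
        while not self.stop_event.is_set():
            values = [wire.read() for wire in self.inputs]
            results = self.func(*values)            # one value per output wire
            for wire, value in zip(self.outputs, results):
                wire.write(value)
            time.sleep(self.period)


# Example circuit: distance -> [double] -> doubled -> [threshold] -> alarm
stop_event = threading.Event()
distance, doubled, alarm = Wire(0.2), Wire(), Wire()
blocks: List[Block] = [
    Block(lambda d: (d * 2,), [distance], [doubled], stop_event),
    Block(lambda d: (1.0 if d < 0.5 else 0.0,), [doubled], [alarm], stop_event),
]
for block in blocks:
    block.start()
time.sleep(0.1)          # let the blocks iterate for a while
stop_event.set()
for block in blocks:
    block.join()
print(doubled.read(), alarm.read())
```

Each wire carries only the latest value, as a physical wire would; blocks that need history have to keep it in their own state.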

- Expected results: a new tool to program reactive robot behaviors using a visual language based on blocks and wires.

- Knowledge Prerequisite: Python programming skills.

- Mentors: Eduardo Perdices (edupergar AT gmail.com) and José María Cañas (josemaria.plaza AT urjc.es).

Project #8: Reinforcement Learning for Autonomous Driving with Gazebo and OpenAI gym

Brief Explanation: OpenAI gym is a toolkit for developing and comparing reinforcement learning algorithms. Gazebo is a well-known, powerful robotics simulator that can help test different algorithms. Both tools are widely used by the community.

In this project, we would like to combine these tools to develop an autonomous driving robot that learns using state-of-the-art reinforcement learning (RL) techniques. The robot would learn how to drive in different scenarios and conditions. Other learning techniques, such as classification neural networks or regression neural networks, have already been tested on autonomous driving robots, so this project aims to compare those techniques with RL, understanding how each one performs and looking for weaknesses and advantages. In the first two videos, the already studied techniques for autonomous driving are shown. In the following videos, different examples of RL algorithms developed using OpenAI gym are displayed.
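As a rough illustration of the kind of learning loop involved, the sketch below runs tabular Q-learning on a toy lane-keeping problem. It uses neither OpenAI gym nor Gazebo: `LaneEnv` is a hypothetical stand-in that only mimics a gym-style `reset()`/`step()` interface, and the reward values and hyperparameters are arbitrary choices for this example.

```python
import random
from typing import List, Tuple


class LaneEnv:
    """Toy road with 5 lateral positions; position 2 is the lane centre."""
    N_STATES, N_ACTIONS, CENTER = 5, 3, 2   # actions: 0=left, 1=straight, 2=right

    def __init__(self, rng: random.Random) -> None:
        self.rng = rng
        self.pos = self.CENTER
        self.steps = 0

    def reset(self) -> int:
        self.pos = self.rng.randrange(self.N_STATES)
        self.steps = 0
        return self.pos

    def step(self, action: int) -> Tuple[int, float, bool]:
        self.pos += action - 1
        self.steps += 1
        if not 0 <= self.pos < self.N_STATES:
            # The car left the road: large penalty and end of episode.
            return min(max(self.pos, 0), self.N_STATES - 1), -10.0, True
        reward = -float(abs(self.pos - self.CENTER))
        return self.pos, reward, self.steps >= 20


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.1, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    env = LaneEnv(rng)
    q = [[0.0] * env.N_ACTIONS for _ in range(env.N_STATES)]
    for _ in range(episodes):
        state, done = env.reset(), False
        while not done:
            if rng.random() < epsilon:                       # explore
                action = rng.randrange(env.N_ACTIONS)
            else:                                            # exploit
                action = max(range(env.N_ACTIONS), key=lambda a: q[state][a])
            nxt, reward, done = env.step(action)
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q


q_table = train()
policy = [max(range(LaneEnv.N_ACTIONS), key=lambda a: q_table[s][a])
          for s in range(LaneEnv.N_STATES)]
print(policy)   # one greedy action per lateral position
```

In the actual project the state would come from simulated camera images rather than a handful of discrete positions, so a table would be replaced by a function approximator such as a neural network.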

- Expected results: RL autonomous driving robot using Gazebo and OpenAI gym.

- Knowledge Prerequisites: Python and C++ programming skills.

- Mentors: Sergio Paniego Blanco (sergiopaniegoblanco AT gmail.com) and David Pascual (d.pascualhe AT gmail.com)

Application instructions for GSoC-2020

We encourage students to contact the relevant mentors before submitting their application on the official GSoC website. If in doubt about which mentor to contact for a given project, send a message to jderobot AT gmail.com. We recommend browsing previous GSoC student pages to look for ready-to-use projects, and to get an idea of the expected amount of work for a valid GSoC proposal.

Requirements

- Git experience

- C++ and Python programming experience (depending on the project)

Programming tests

| Project | #1 | #2 | #3 | #4 | #5 | #6 | #7 | #8 | #9 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Academy (A) | X | X | X | X | X | X | X | X | * |
| C++ (B) | O | X | O | X | X | O | O | X | * |
| Python (C) | X | X | X | X | X | X | X | X | * |
| Industrial Robots (D) | * | * | O | * | * | * | * | * | * |

| Where: | |
| --- | --- |
| * | Not applicable |
| X | Mandatory |
| O | Optional |

Before any proposal is accepted, all candidates have to complete these programming challenges:

- (A) Academy challenge

- (B) C++ challenge

- (C) Python challenge

- (D) Industrial robots challenge

Send us your information

AFTER doing the programming tests, fill in this web form with your information and challenge results. You are then invited to ask the project mentors about the project details. We may also require more information from you, such as:

- Contact details

- Name and surname:

- Country:

- Email:

- Public repository/ies:

- Personal blog (optional):

- Twitter/Identica/LinkedIn/others:

- Timeline

- Now split your project idea into smaller tasks. Quantify the time you think each task needs. Finally, draw up a tentative project plan (timeline) including dates that cover the whole GSoC period. Don’t forget to also include the days on which you don’t plan to code because of exams, holidays, etc.

- Do you understand this is a serious commitment, equivalent to a full-time paid summer internship or summer job?

- Do you have any known time conflicts during the official coding period?

- Studies

- What are your school and degree?

- Would your application contribute to your ongoing studies/degree? If so, how?

- Programming background

- Computing experience: operating systems you use on a daily basis, known programming languages, hardware, etc.

- Robot or Computer Vision programming experience:

- Other software programming:

- GSoC participation

- Have you participated in GSoC before?

- How many times, which year, which project?

- Have you applied but were not selected? When?

- Have you submitted/will you submit another proposal for GSoC 2020 to a different org?

Previous GSoC students

- Nikhil Khedekar (GSoC-2019) Migration to ROS of drones exercises on JdeRobot Academy

- Shyngyskhan Abilkassov (GSoC-2019) Amazon warehouse exercise on JdeRobot Academy

- Jeevan Kumar (GSoC-2019) Improving DetectionSuite DeepLearning tool

- Baidyanath Kundu (GSoC-2019) A parameterized automata Library for VisualStates tool

- Srinivasan Vijayraghavan (GSoC-2019) Running Python code on the web browser

- Pankhuri Vanjani (GSoC-2019) Migration of JdeRobot tools to ROS 2

- Pushkal Katara (GSoC-2018) VisualStates tool

- Arsalan Akhter (GSoC-2018) Robotics-Academy

- Hanqing Xie (GSoC-2018) Robotics-Academy

- Sergio Paniego (GSoC-2018) PyOnArduino tool

- Jianxiong Cai (GSoC-2018) Creating realistic 3D map from online SLAM result

- Vinay Sharma (GSoC-2018) DeepLearning, DetectionSuite tool

- Nigel Fernandez (GSoC-2017)

- Okan Asik (GSoC-2017) VisualStates tool

- S.Mehdi Mohaimanian (GSoC-2017)

- Raúl Pérula (GSoC-2017) Scratch2JdeRobot tool

- Lihang Li (GSoC-2015) Visual SLAM, RGBD, 3D Reconstruction

- Andrei Militaru (GSoC-2015) Interoperation of ROS and JdeRobot

- Satyaki Chakraborty (GSoC-2015) Interconnection with Android Wear

How to increase your chances of being selected in GSoC-2020

If you put yourself in the shoes of the mentor who has to select the student, you’ll immediately realize that there are some behaviors that are usually rewarded. Here are some examples.

- Be proactive: Mentors are more likely to select students who openly discuss the existing ideas and/or propose their own. It is a bad idea to submit your proposal only on the Google website without discussing it, because it won’t be noticed.

- Demonstrate your skills: Consider that mentors are being contacted by several students who apply for the same project. A way to show that you are the best candidate is to demonstrate that you are familiar with the software and that you can code. How? Browse the bug tracker (the issues of the JdeRobot project on GitHub), fix some bugs and propose your patch by submitting a pull request, and/or ask mentors to challenge you! Moreover, bug fixes are a great way to get familiar with the code.

- Demonstrate your intention to stay: Students who are likely to disappear after GSoC are less likely to be selected. This is because there is no point in developing something that won’t be maintained. Moreover, one goal of GSoC is to bring new developers to the community.

RTFM

Read the relevant information about GSoC in the wiki / web pages before asking. Most FAQs have been answered already!

- Full documentation about GSoC on official website.

- FAQ from GSoC web site.

- If you are new to JdeRobot, take the time to familiarize yourself with JdeRobot.