Program-A-Robot-2018

Introduction

| Program-A-Robot main features |
| --- |
| Online competition |
| Python |
| Drone programming on your web browser |
| Free |
| Gazebo simulator |

Drones are aerial robots that have gained popularity in recent years. They originated in the military field and, as their cost has dropped, have opened up possibilities for commercial use in several civil applications such as infrastructure monitoring, agriculture, surveillance and event recording. As robots, they are composed of sensors, actuators and processors on the hardware side. Their intelligence, however, lies in their software.

The Program-A-Robot competition is aimed at engineering students (undergraduate, master's or PhD) from all over the world. Its goal is to foster robotics and computer vision by providing engaging challenges and an opportunity to measure your solution against those of fellow competitors. It was born in 2016 as a robotics competition inside Rey Juan Carlos University (2016, Spanish). The second edition (2017, Spanish) was organized inside the Spanish Jornadas Nacionales de Robótica. This year the third edition will be held as part of the International Conference on Intelligent Robots and Systems (IROS 2018), which will take place in Madrid on October 1-5, 2018.

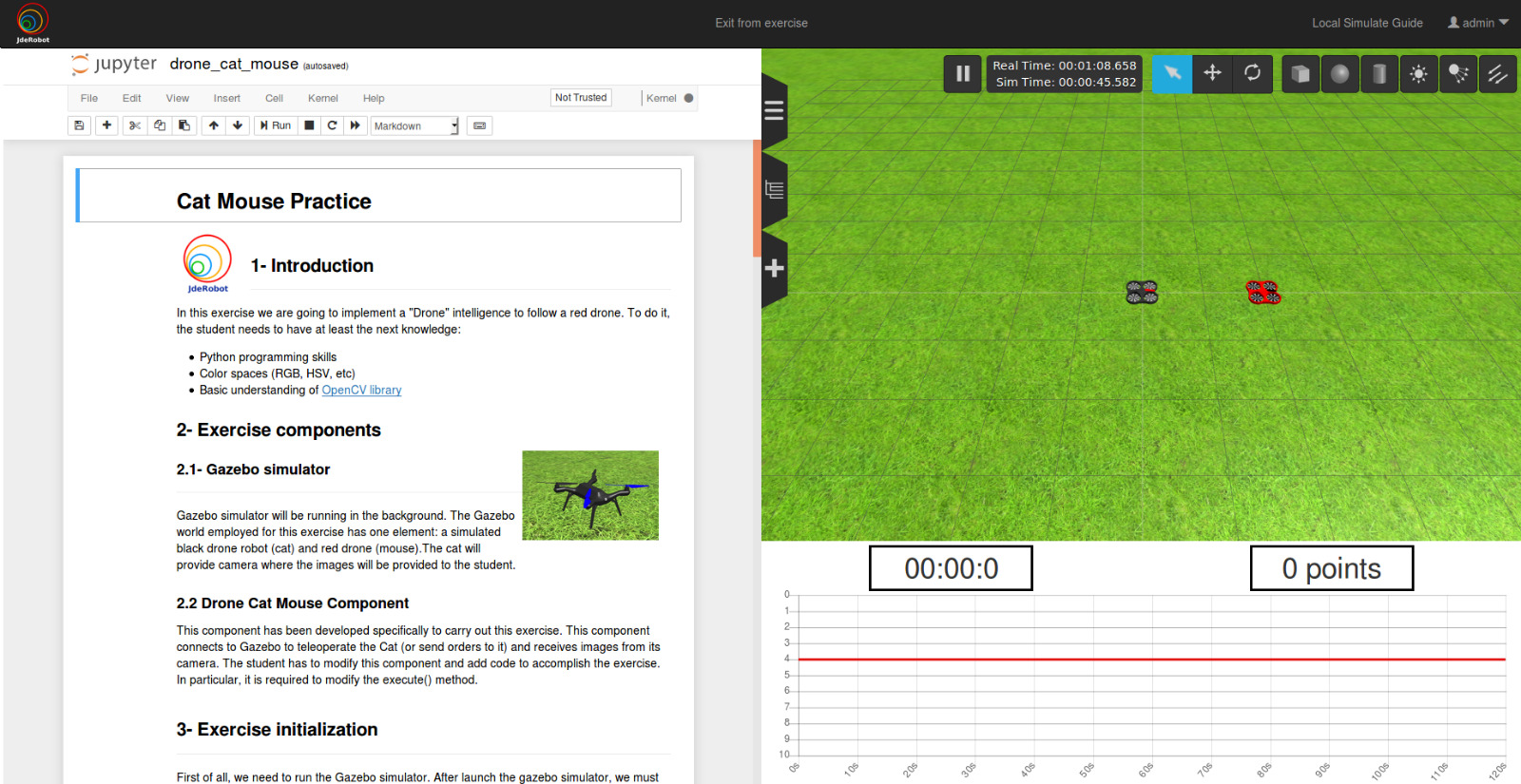

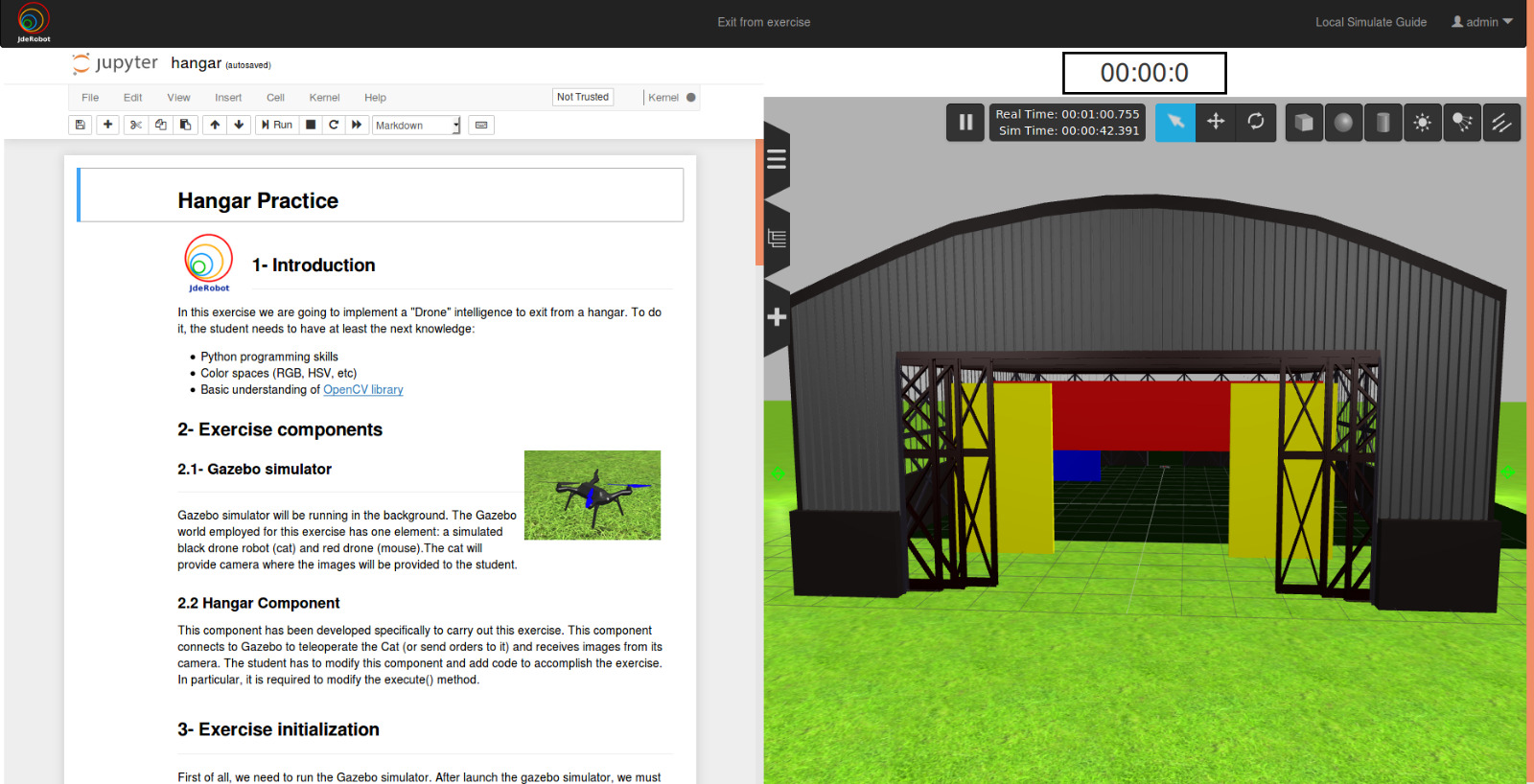

In the 2018 edition the competition proposes two challenges, both about programming the autonomous intelligence of a drone. In the Cat and Mouse challenge the cat drone has to be programmed to search for, chase and stay close to another mobile aerial robot (the mouse drone). Several autonomous mice are provided by the organizing committee. In the Escape from the Hangar challenge the drone has to take off inside a hangar and get out of it while avoiding collisions with moving walls. It is all about programming, about robot programming.

The participants will program in Python using the Gazebo simulator and the JdeRobot-Academy software platform. A simple infrastructure based on Docker and a WebIDE has been developed so that you can program the robot from your web browser.

It is fostered by JdeRobot Foundation and GMV.

Organization and evaluation

Timeline

- Competition announcement.

- Each participant is required to register individually in the JdeRobot-Academy WebIDE and select the Participate in Program-A-Robot 2018@IROS button.

- All participants will develop their code for the Cat and Mouse challenge before September 29th (23:59h GMT).

- All participants will develop their code for the Escape from the Hangar challenge before September 29th (23:59h GMT).

- On September 29th the code is automatically submitted to the organizing committee.

- Finals will take place at IROS on October 3rd. The final session can be followed at IROS and will also be streamed on YouTube Live. If the number of participants allows it, the qualifying sessions will be streamed as well.

Scores

To determine the score of your algorithms we have created two automatic referees which evaluate their performance. The referees are available while you prepare your code or train, so you can get your score and improve your algorithms. To be fair, on the day of the finals the code from all participants will be run on the same computer, provided by the organizing committee, and under the same conditions (starting positions of the drones, behavior of the mice, movement of the obstacle walls in the hangar…).

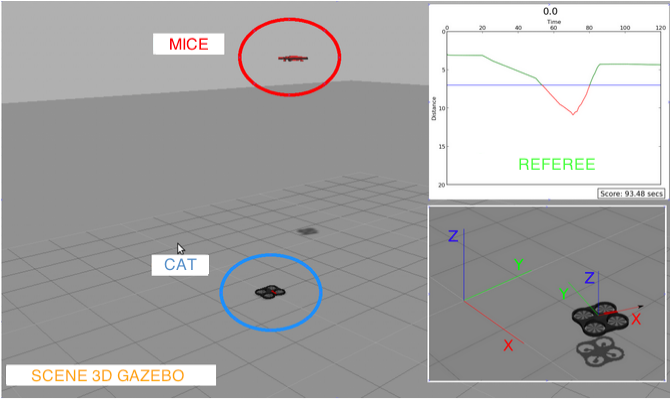

Score in the Cat and Mouse challenge

Each round will take 2 minutes. Your cat robot will start in different positions relative to the autonomous mouse; it has to look for it and chase it. The organizing committee has programmed several different autonomous mice, from those with simple behavior to others that are more difficult to track. Some of them will be released for training. The automatic referee measures the current instantaneous distance from your cat to the mouse and shows its evolution along the whole round on the screen. When the distance is below a certain threshold (close-threshold) both the progress line and the background are painted green; when it is above, they are painted red. The score increases for as long as the cat drone stays near the mouse. It is forbidden to touch the mouse, and each contact will be penalized in the score.
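The exact formula used by the referee is not given here. As a rough sketch of the idea only, a score of this kind could be accumulated from sampled distances as follows; the sampling period, the threshold value and the contact penalty are all hypothetical values chosen for illustration.

```python
def cat_mouse_score(distances, contacts, dt=1.0,
                    close_threshold=2.0, contact_penalty=5.0):
    """Hypothetical score: seconds spent near the mouse minus contact penalties.

    distances: cat-to-mouse distance sampled every `dt` seconds.
    contacts:  number of times the cat touched the mouse.
    All default values are assumptions, not the referee's real settings.
    """
    close_samples = sum(1 for d in distances if d < close_threshold)
    return close_samples * dt - contact_penalty * contacts


# Three of the four samples are below the assumed threshold.
print(cat_mouse_score([1.0, 3.0, 1.5, 0.5], contacts=0))
```

A sketch like this can help when reasoning about trade-offs, for example whether flying very close to the mouse is worth the risk of a contact penalty.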

Score in the Escape from the hangar challenge

Each round will take 1 minute. Your drone will start in a specific area of the hangar and has to escape from it without hitting any of the mobile walls, which move in a random pattern. The referee measures how many seconds your drone takes to leave the hangar. The score starts at 60 points and decreases for as long as your drone remains inside the hangar. It is forbidden to touch the walls, and each contact will be penalized in the score.
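As an illustration only, the rule above could be modelled as below. The rate of one point per second, the contact penalty and the floor at zero are assumptions made for this sketch; the text only states that the score starts at 60 and decreases while the drone is inside.

```python
def escape_score(seconds_to_exit, contacts,
                 initial_score=60.0, contact_penalty=5.0):
    """Hypothetical score for one Escape from the Hangar round.

    Assumes one point is lost per second spent inside the hangar and a
    fixed penalty per wall contact; the result never goes below zero.
    """
    remaining = initial_score - min(seconds_to_exit, initial_score)
    return max(0.0, remaining - contact_penalty * contacts)


print(escape_score(20, contacts=0))  # leaves after 20 s, no contacts
print(escape_score(20, contacts=2))  # same time, two wall contacts
```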

Finals

The competition is organised as several consecutive knockout sessions in which your drone will gradually face mice that are increasingly difficult to follow and tighter times to get out of the hangar. Each session is composed of two rounds of the Cat and Mouse and two rounds of the Escape from the Hangar. The scores of all four rounds are summed to get the global score for the session.

After the first qualifying session (Q1) the best 20 drones will pass to the next session (Q2). After the second session (Q2) the best 10 drones will pass to the last session (Q3). In Q3 the final positions in the competition are decided. The winner will be the drone with the highest score in Q3, that is, the one that keeps close to the mouse for the longest time and leaves the hangar in the shortest time, both in a safe way.

The jury will be a committee of robotics experts, which may declare the competition without a winner if no solution is of sufficient quality and may disqualify participants who repeatedly collide with the mouse or the walls inside the hangar. Its decisions are final.

Awards

The Cat and Mouse challenge

This challenge consists of programming the intelligence of a drone we call cat. Its aim is to search, chase and stay close to another similar drone we call mouse. The mouse is red, for an easier detection in the images. A 3D world has been prepared for the exercise in the Gazebo simulator. In this world there will be two robots: the AR.Drone which acts as a mouse (in red color) and the cat drone (in black color). There are no other obstacles nearby in the world but the ground. The following video shows an example cat chasing a mouse:

Your code will be developed inside the corresponding Jupyter notebook cells of JdeRobot-Academy WebIDE. Your code will take the appropriate movement decisions based on the information it receives from the drone sensors. In order to develop your drone software we suggest:

- First of all, it is recommended to implement a method that detects the mouse drone in the image and obtains its position in pixels. Typically the center of mass of the pixels that pass the red filter can be a good approximation of the center of the mouse robot (there are better ones).

- With the mouse robot detected in the image, the next step is to decide which motion is appropriate and command it to the cat motors with the sendCMDVel() method. There are many ways to decide it: case based control, PID control, automata, etc. This is where your skill comes into play.
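The first suggestion can be sketched as below. This is a minimal example, not the organizers' detector: it assumes the image is a NumPy array of shape (height, width, 3) in RGB order, and it uses a plain per-channel threshold instead of a tuned HSV filter. The function name and threshold values are hypothetical.

```python
import numpy as np


def red_centroid(image_rgb, min_red=150, max_other=80):
    """Return the (x, y) pixel centroid of strongly red pixels, or None.

    The thresholds are illustrative assumptions and would need tuning
    against real images from the simulator.
    """
    r = image_rgb[:, :, 0].astype(int)
    g = image_rgb[:, :, 1].astype(int)
    b = image_rgb[:, :, 2].astype(int)
    mask = (r >= min_red) & (g <= max_other) & (b <= max_other)
    ys, xs = np.nonzero(mask)          # row and column indices of red pixels
    if xs.size == 0:
        return None                    # mouse not visible in this image
    return float(xs.mean()), float(ys.mean())


# Synthetic 10x10 black image with a 2x2 red patch.
test_image = np.zeros((10, 10, 3), dtype=np.uint8)
test_image[2:4, 6:8] = (255, 0, 0)
print(red_centroid(test_image))
```

Returning None when no pixel passes the filter makes it easy for the control step to switch to a search behavior.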

At the beginning of the notebook, the autonomous mouse will be launched. We have also prepared a training mouse that implements most of the movements that you will find in the competition. The movements of this mouse have an incremental difficulty, starting with very simple movements at minimum speed, continuing with more complex movements at medium speed and ending with even more complex movements at maximum speed.

The Escape from the Hangar challenge

This challenge consists of programming the intelligence of a drone that has to take off and escape from the hangar. A 3D world has been prepared for the exercise in the Gazebo simulator. In this world there will be only one robot: the AR.Drone in black color. There are also six mobile walls acting as moving obstacles. They follow random patterns and make it difficult to leave the hangar. No collision is allowed. The starting point for the drone may be changed during the competition, so a purely position-based control is not recommended.

Drone Programming

Your entire program will be in the Jupyter notebook and will have the necessary code to govern the behavior of the quadricopter. The behavior of the drone will typically have a perceptual part and a control part. The perception part collects the sensory data (mainly the camera), analyzes them and extracts information from them. The control part decides which movement is appropriate and issues commands to the robot’s motors.

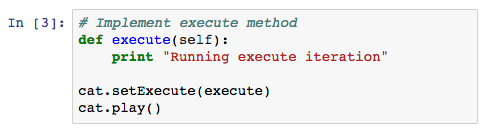

Iterative loop template

A Jupyter notebook and a template are provided so the participant may include her code. The template follows an iterative loop: it continuously performs iterations, calling the Execute method each time. The participant's perception code and control code should be added to that method. The provided Jupyter notebook uses ROS and ICE interfaces to communicate with the Gazebo simulator, in particular with the Gazebo plugins that implement the quadricopter sensors and actuators. All this communication is hidden from the programmer, who simply has a few Python methods as the programming interface for reading data from the camera and GPS sensor and for commanding the quadricopter motors.
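The perception/control split inside the iterative loop might look like the sketch below. It is not the actual template: the class name MyAlgorithm, the helper methods perceive and decide, and the FakeSensor and FakeDrone stand-ins are hypothetical and exist only so the example runs outside the notebook. Only getImage and sendCMDVel are names taken from this page, and the lowercase execute spelling is an assumption.

```python
class FakeSensor:
    """Hypothetical stand-in for the notebook's sensor object."""
    def getImage(self):
        return [[(0, 0, 0)]]           # a 1x1 black image


class FakeDrone:
    """Hypothetical stand-in that records commands instead of flying."""
    def __init__(self):
        self.last_command = None

    def sendCMDVel(self, *args):
        self.last_command = args


class MyAlgorithm:
    def __init__(self, sensor, drone):
        self.sensor = sensor
        self.drone = drone

    def perceive(self, image):
        # Perception part: analyze sensor data (placeholder, sees nothing).
        return None

    def decide(self, target):
        # Control part: choose a command; here, always hover.
        return (0, 0, 0, 0, 0, 0)

    def execute(self):
        # One iteration: read sensors, perceive, decide, act.
        image = self.sensor.getImage()
        target = self.perceive(image)
        self.drone.sendCMDVel(*self.decide(target))


algorithm = MyAlgorithm(FakeSensor(), FakeDrone())
for _ in range(3):                     # the real template iterates for you
    algorithm.execute()
print(algorithm.drone.last_command)
```

Keeping perception and control in separate methods makes it easier to test each part on its own before running in the simulator.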

To insert your code in the cat drone and thus implement your algorithm we recommend the following steps:

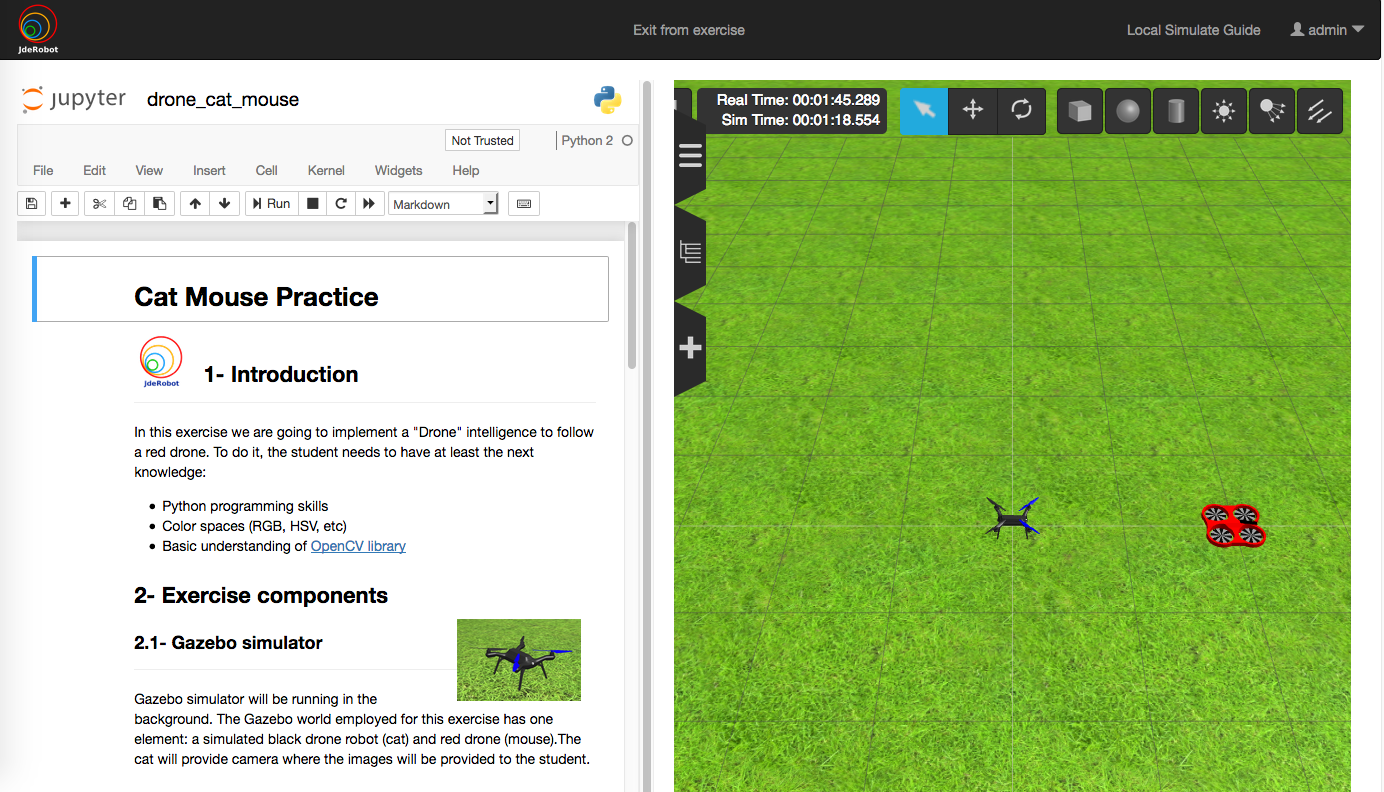

- Open the corresponding exercise at JdeRobot-Academy WebIDE and enter code in the notebook cells where the execute function appears as shown in the image:

- Using the API provided for robot programming and use the available methods to fly the drone, get images, etc..

As sensory equipment the drone has two cameras (one frontal and another facing downwards) and a 3D position sensor (combination of GPS and IMU). Its actuators are the four motors of its propellers. Both the sensors and the actuators can be accessed from the software using the following programming API.

Reading camera images

The images of the front and ventral cameras of the drone are available, but not at the same time. Only one of the cameras can be active. The getImage instruction returns the image from the active drone camera and stores it in the droneImage variable. From this moment on, this variable contains an image that we can treat as we wish.

droneImage = self.sensor.getImage()

To change the active camera the toggleCam method may be used:

self.sensor.toggleCam()

For the Cat and Mouse challenge you will need to detect the mouse robot. The mouse is red, so it can typically be located very well within the images using a conveniently adjusted HSV filter. The provided Jupyter notebook gives more information about how to process the camera images to help detect the mouse, for example using the OpenCV machine vision library. To convert the image from RGB to HSV:

image_HSV = cv2.cvtColor(droneImage, cv2.COLOR_RGB2HSV)
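One detail worth keeping in mind when adjusting an HSV filter for red is that red sits at both ends of the hue circle, so a red filter usually needs two hue ranges. The sketch below illustrates this on a single pixel using Python's standard colorsys module, where hue is in the range 0 to 1; in OpenCV 8-bit images hue runs from 0 to 179, so the same idea applies with ranges near 0 and near 179. The function name and the threshold values are hypothetical.

```python
import colorsys


def is_red(r, g, b, hue_tolerance=0.05, min_saturation=0.5, min_value=0.3):
    """Return True if an 8-bit RGB pixel looks red under assumed thresholds."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    # Red wraps around the hue circle: accept hues near 0 and near 1.
    near_red = h <= hue_tolerance or h >= 1.0 - hue_tolerance
    return near_red and s >= min_saturation and v >= min_value


print(is_red(255, 0, 0))      # pure red, hue 0
print(is_red(255, 0, 20))     # slightly bluish red, hue just below 1
print(is_red(0, 255, 0))      # green
print(is_red(200, 200, 200))  # grey: hue is 0 but saturation is too low
```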

Reading 3D position and orientation (GPS+IMU)

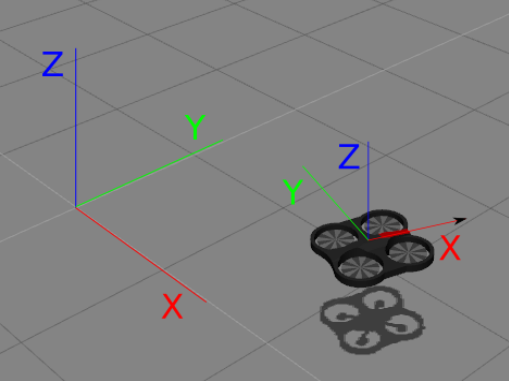

Two reference systems are used on the competition: an absolute system and the robot relative system which is attached to the drone and moves with it. They are shown on this figure:

Mouse tracking can be addressed without knowing the absolute position of the drone in the world. However, it can be useful if you want to optimize your mouse search when your drone does not see the mouse in the image.

The getPose3D method is available to get the 3D position and orientation of the drone in the absolute system. The following instructions get the X, Y, Z current coordinates of the drone in the Gazebo world:

self.sensor.getPose3D().x

self.sensor.getPose3D().y

self.sensor.getPose3D().z

To obtain the 3D orientation of the drone, as a quaternion:

self.sensor.getPose3D().q0

self.sensor.getPose3D().q1

self.sensor.getPose3D().q2

self.sensor.getPose3D().q3
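For control purposes the heading (yaw) is often more convenient than the raw quaternion. The conversion below is a minimal sketch using the standard formula; it assumes that q0 is the scalar part and q1, q2, q3 are the x, y, z components of a unit quaternion, which is not stated on this page and should be checked against the simulator.

```python
import math


def yaw_from_quaternion(q0, q1, q2, q3):
    """Rotation around the vertical Z axis in radians.

    Assumes q0 is the scalar component and the quaternion is normalized.
    """
    return math.atan2(2.0 * (q0 * q3 + q1 * q2),
                      1.0 - 2.0 * (q2 * q2 + q3 * q3))


# Identity orientation and a 90-degree turn around Z.
print(yaw_from_quaternion(1.0, 0.0, 0.0, 0.0))
half = math.radians(90) / 2.0
print(yaw_from_quaternion(math.cos(half), 0.0, 0.0, math.sin(half)))
```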

Commanding motion to the motors

To move the drone the sendCMDVel method is used, passing the speed arguments.

self.drone.sendCMDVel(vy,vx,vz,yaw,roll,pitch)

It sends commands for translation and rotation speeds. The translation speeds follow the robot-relative reference system shown in the figure above, which is attached to the drone itself: vx front/rear, vy left/right and vz up/down. The yaw speed sets the rotation around a vertical axis, perpendicular to the plane of the drone. The sendCMDVel() method receives 6 parameters: vy, vx, vz, yaw, roll and pitch. Each value must be between -1 and 1. The roll and pitch values have no effect in the simulated Gazebo world.

For example, the following command makes the drone immediately move forward at a speed of 0.5 (half power). The order remains active until you send a different one.

self.drone.sendCMDVel(0,0.5,0,0,0,0)

The sendCMDVel() method allows you to combine several motions in a single command. The following command causes the drone to move forward at a speed of 0.5, move to the right at 0.4 and simultaneously climb along the Z-axis at 0.2 and rotate around the Z-axis at a speed of 0.1.

self.drone.sendCMDVel(-0.4,0.5,0.2,0.1,0,0)

Finally, the following instruction can be used to stop the movement of the drone.

self.drone.sendCMDVel(0,0,0,0,0,0)
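As one possible way to turn a detected pixel position into a command, the sketch below uses simple proportional control and clamps every value to the allowed range of -1 to 1. It follows the parameter order listed in the text above (vy, vx, vz, yaw, roll, pitch). The function names, image size, gains, search behavior and the sign conventions for yaw and vz are all assumptions that need to be verified in the simulator.

```python
def clamp(value, low=-1.0, high=1.0):
    """Keep a command value inside the allowed range."""
    return max(low, min(high, value))


def chase_command(target_xy, image_width=320, image_height=240,
                  gain=0.5, forward_speed=0.3):
    """Build a (vy, vx, vz, yaw, roll, pitch) tuple from a pixel position.

    target_xy is the target position in the front camera image, or None
    when the target is not visible.
    """
    if target_xy is None:
        # Target not seen: rotate in place to search for it.
        return (0.0, 0.0, 0.0, 0.3, 0.0, 0.0)
    x, y = target_xy
    # Normalized errors in [-1, 1]; positive err_x means right of center.
    err_x = (x - image_width / 2.0) / (image_width / 2.0)
    err_y = (y - image_height / 2.0) / (image_height / 2.0)
    yaw = clamp(-gain * err_x)   # assumed: positive yaw turns left
    vz = clamp(-gain * err_y)    # image y grows downwards: target above, climb
    return (0.0, forward_speed, vz, yaw, 0.0, 0.0)


print(chase_command(None))
print(chase_command((320, 120)))  # target at the right edge of the image
```

Inside the notebook the resulting tuple could be passed on with self.drone.sendCMDVel(*command). A proportional controller is only a starting point; the text mentions PID control and automata as alternatives.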

Software infrastructure

The required installation to have the competition environment running on your machines is minimal, just Docker and a web browser. One of the great advantages of using this infrastructure is the possibility of running the same infrastructure on Linux, Windows and MacOSX machines.

- Install Docker on your machine and download the official image of the Program-A-Robot-2018 competition.

- Register yourself in the JdeRobot-Academy WebIDE.

- Program and test your code.

Docker container

You only need to install Docker, download the image that contains all the infrastructure and, if you work behind a private network, open port 8888 in your router. This port is the access point between the application and the JdeRobot environment.

- In Ubuntu-Linux

sudo apt install docker.io

To download the Docker image, type the following command in a console:

sudo docker pull jderobot/academy-simulation

JdeRobot-Academy WebIDE

Sign up

To register in the JdeRobot-Academy WebIDE just go to its web page, click on “Exercises” in the upper right corner and then on the “sign up” text. Once registered in the WebIDE, you will have full access to prepare your challenges for half a year.

Developing code for the challenges and testing it

To launch the challenge you want to work on you will have to:

-

Login into the JdeRobot-Academy web.

-

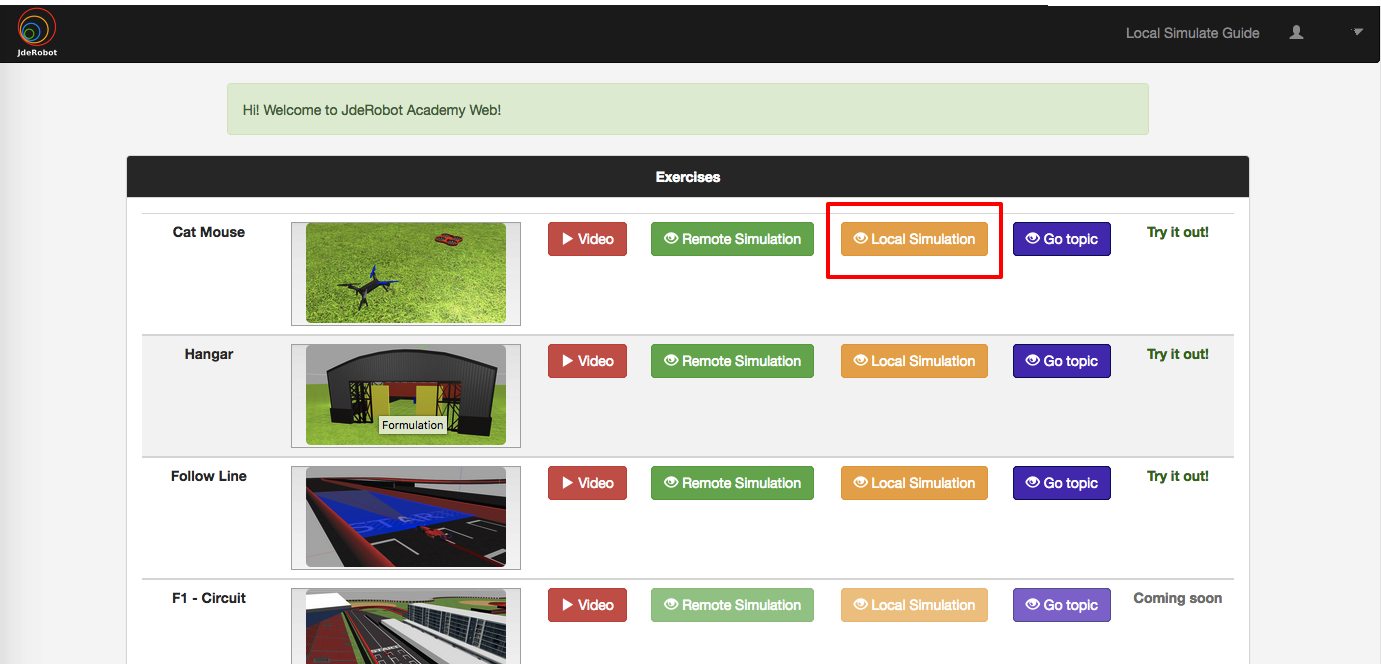

Go to ‘Exercises’, it will lead to a page with the two challenges of the competition are displayed.

-

Select the challenge you want to work on (

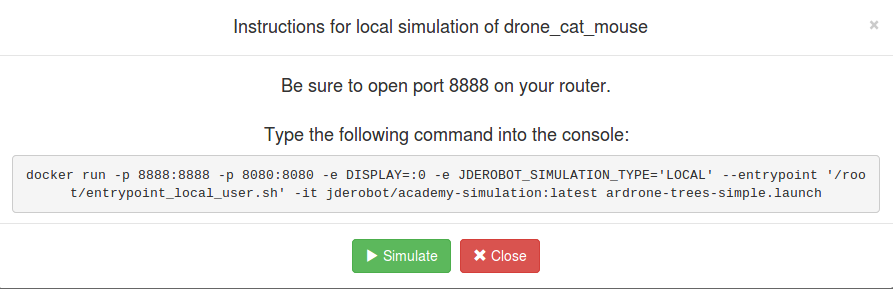

Cat and Mouse or Escape from Hangar) by clicking on the corresponding Local Simulation button. -

Execute the command that starts the corresponding

Dockercontainer.

- For the Cat and Mouse challenge:

docker run -p 8888:8888 -p 8080:8080 -e DISPLAY=:0 -e JDEROBOT_SIMULATION_TYPE='LOCAL' --entrypoint '/root/entrypoint_local_user.sh' -it jderobot/academy-simulation:latest ardrone-trees-simple.launch

- For the Escape from the Hangar challenge:

docker run -p 8888:8888 -p 8080:8080 -e DISPLAY=:0 -e JDEROBOT_SIMULATION_TYPE='LOCAL' --entrypoint '/root/entrypoint_local_user.sh' -it jderobot/academy-simulation:latest hangar.launch

-

Insert your code in the appropriate

Jupyternotebook cell, just in your browser -

Run your code and check results.

Once the simulation is loaded, two columns will appear on the site. On the left, using Jupyter notebook as the environment, you will find some theoretical advice and the code fragments that each participant has to fill in. On the right is the Gazebo simulator, which will respond to the commands you enter in the notebook column.

Frequently Asked Questions

May I participate without attending IROS?

Yes. This is a fully online and free competition. It can also be followed on YouTube Live. In addition, as a participant, you are invited to follow the championship in person for free at the IROS venue in Madrid.

Who can participate?

Anybody; it’s an open competition, although it is mainly aimed at university engineering students from all over the world (undergraduate, master, PhD).

May I use other middleware or language to program the drone?

Not for this competition. Beyond the championship, use whatever you want :-). To make the comparison among all participants fair, everyone will use the same version of the Gazebo simulator, the Python language and the same JdeRobot-Academy WebIDE.

Does the competition infrastructure only work for Linux?

No, it has been tested and works on Linux, Microsoft-Windows and MacOS computers.

Support

For comments and questions about the competition and participation, please go to the web forum.